# Dataset

Level Navi Agent Search

Level-Navi Agent is an open-source general-purpose web search agent framework that can decompose complex problems and progressively search for information on the internet until it answers user questions. By providing the Web24 dataset, covering five major fields: finance, games, sports, movies, and events, it provides a benchmark for evaluating model performance on search tasks. The framework supports zero-shot and few-shot learning, providing an important reference for the application of large language models in the field of Chinese web search agents.

AI search

51.9K

English Picks

Signs

Signs, powered by NVIDIA, is an innovative platform designed to help users learn American Sign Language (ASL) through artificial intelligence. It allows users to contribute data by recording sign language videos, with the aim of building the world's largest open sign language dataset. The platform utilizes AI-driven real-time feedback and 3D animation to provide a welcoming learning experience for beginners, while simultaneously offering data support to the sign language community, fostering the spread and diversity of sign language learning. The dataset is planned to be made publicly available in the second half of 2025, encouraging the development of more related technologies and services.

Education

67.1K

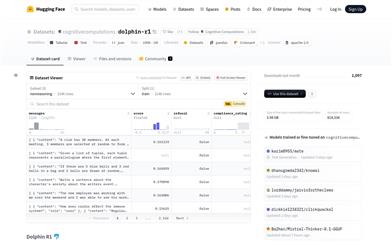

Dolphin R1

Dolphin R1 is a dataset created by the Cognitive Computations team, aimed at training reasoning models similar to the DeepSeek-R1 Distill model. The dataset comprises 300,000 reasoning samples from DeepSeek-R1, 300,000 reasoning samples from Gemini 2.0 flash thinking, and 200,000 Dolphin chat samples. This combination provides researchers and developers with abundant training resources, enhancing model reasoning and dialogue capabilities. The creation of this dataset was supported by sponsors such as Dria, Chutes, and Crusoe Cloud, who contributed computational resources and funding. The release of the Dolphin R1 dataset offers a critical foundation for research and development in the field of natural language processing, fostering the advancement of related technologies.

AI Model

55.5K

Nemotron CC

Nemotron-CC is a dataset of 6.3 trillion tokens based on Common Crawl. It integrates classifiers, rewrites synthetic data, and reduces reliance on heuristic filters to convert English Common Crawl into a long-term pre-training dataset with 6.3 trillion tokens, 4.4 trillion of which are globally de-duplicated raw tokens, and 1.9 trillion are synthetically generated tokens. This dataset strikes a better balance between accuracy and data volume, making it significant for training large language models.

AI Model

50.0K

AGIBOT WORLD

AGIBOT WORLD is a large-scale robotics learning dataset specifically designed to advance multi-purpose robotic strategies. It includes foundational models, benchmarks, and an ecosystem aimed at providing high-quality robotic data to both academia and industry, paving the way for embodied AI. The dataset encompasses over a million trajectories from more than 100 robots, covering over 100 real-world scenarios, addressing tasks like fine manipulation, tool use, and multi-robot collaboration. It employs state-of-the-art multimodal hardware, including visual-tactile sensors, durable 6-degree-of-freedom dexterous hands, and mobile dual-arm robots, supporting research in imitation learning, multi-agent collaboration, and more. AGIBOT WORLD's goal is to transform large-scale robotics learning and promote the production of scalable robotic systems. It is an open-source platform that invites researchers and practitioners to collaboratively shape the future of embodied AI.

AI Model

45.0K

Rapbank

RapBank is a dataset focused on rap music, collecting a large number of rap songs from YouTube and offering a meticulously designed data preprocessing workflow. This dataset is significant for the field of music generation as it provides a wealth of rap music content that can be used for training and testing music generation models. The RapBank dataset includes 94,164 song links, successfully downloaded 92,371 songs, totaling 5,586 hours of music, covering 84 different languages, with English songs accounting for the majority, approximately two-thirds of the total duration.

Music Generation

48.3K

RLVR GSM MATH IF Mixed Constraints

The RLVR-GSM-MATH-IF-Mixed-Constraints dataset focuses on math problems, containing various types of math questions and corresponding answers for training and validating reinforcement learning models. Its significance lies in helping develop smarter educational tools that enhance students' abilities to solve math problems. The product background information indicates that this dataset was released by Allenai on the Hugging Face platform, containing the GSM8k and MATH subsets, as well as IF Prompts with verifiable constraints, licensed under MIT License and ODC-BY license.

Education

47.5K

Mammoth VL

MAmmoTH-VL is a large-scale multimodal reasoning platform that significantly enhances the performance of multimodal large language models (MLLMs) on various multimodal tasks through instruction tuning techniques. The platform has created a dataset consisting of 12 million instruction-response pairs using open models, covering a wide range of reasoning-intensive tasks and providing detailed and accurate reasoning steps. MAmmoTH-VL has achieved state-of-the-art performance on benchmarks such as MathVerse, MMMU-Pro, and MuirBench, showcasing its importance in education and research.

AI Model

46.6K

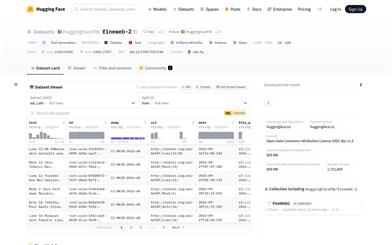

Fineweb2

FineWeb2 is a large-scale multilingual pretrained dataset provided by Hugging Face, covering over 1,000 languages. This dataset is meticulously designed to support the pretraining and fine-tuning of natural language processing (NLP) models, especially across various languages. It is renowned for its high quality, large scale, and diversity, enabling models to learn universal features across languages and improve performance on specific language tasks. FineWeb2 excels among multilingual pretrained datasets, often outperforming certain databases designed specifically for a single language.

AI Model

47.7K

Olmo 2 1124 13B Preference Mixture

The OLMo 2 1124 13B Preference Mixture is a large multilingual dataset provided by Hugging Face, containing 377.7k generated pairs, aimed at training and optimizing language models, particularly in preference learning and instruction following. Its significance lies in providing a diverse and large-scale data environment that aids in the development of more accurate and personalized language processing technologies.

AI Model

45.3K

Dolmino Mix 1124

The DOLMino dataset mix for OLMo2 stage 2 annealing training is a compilation of various high-quality data sources, designed for the second phase of training the OLMo2 model. This dataset encompasses diverse types of data such as web pages, STEM papers, and encyclopedic entries, aimed at enhancing model performance in text generation tasks. Its significance lies in providing rich training resources for the development of smarter and more accurate NLP models.

Model training and deployment

49.4K

Workflowllm

WorkflowLLM is a data-centric framework designed to enhance the orchestration capabilities of large language models (LLMs). At its core is WorkflowBench, a large-scale supervised fine-tuning dataset containing 106,763 samples from 1,503 APIs across 83 applications and 28 categories. WorkflowLLM fine-tunes the Llama-3.1-8B model to create the WorkflowLlama model optimized specifically for workflow orchestration tasks. Experimental results indicate that WorkflowLlama excels in orchestrating complex workflows and generalizes well to unseen APIs.

Workflow Orchestration

47.2K

Gamegen O

GameGen-O is the first diffusion transformation model customized for generating open-world video games. By simulating various features of game engines, such as innovative characters, dynamic environments, complex actions, and diverse events, it enables high-quality, open-domain generation. Additionally, it offers interactive controllability, which allows for gameplay simulation. The development of GameGen-O involved extensive data collection and processing from the ground up, including the construction of the first open-world video game dataset (OGameData) and efficient sorting, scoring, filtering, and decoupling of titles through a proprietary data pipeline. This robust and comprehensive OGameData serves as the foundation for the model training process.

AI Game Creation

74.8K

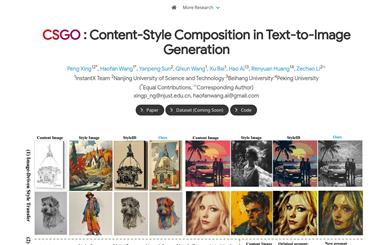

CSGO

CSGO is a text-to-image generation model based on content style synthesis. It generates and automatically cleans stylized data triplets through a data-building pipeline and has constructed the first large-scale style transfer dataset, IMAGStyle, consisting of 210,000 image triplets. The CSGO model employs end-to-end training and clearly decouples content and style features through independent feature injection. It supports image-driven style transfer, text-driven style synthesis, and text-editing-driven style synthesis, offering benefits such as inference without the need for fine-tuning, retaining the generative capabilities of the original text-to-image models, and unifying style transfer and style synthesis.

AI image generation

63.5K

Medtrinity 25M

MedTrinity-25M is a large-scale multimodal dataset featuring multi-granular medical annotations. Developed by multiple authors, it aims to advance research in medical image and text processing. The dataset's construction involves steps such as data extraction and multi-granular text description generation, supporting various medical image analysis tasks, such as visual question answering (VQA) and pathology image analysis.

AI medical health

86.1K

Data Juicer

Data-Juicer is a comprehensive multimodal data processing system aimed at delivering higher quality, richer, and more digestible data for large language models (LLMs). It offers a systematic and reusable data processing library, supports collaborative development between data and models, allows rapid iteration through a sandbox lab, and provides features like data and model feedback loops, visualization, and multidimensional automated evaluation, helping users better understand and improve their data and models. Data-Juicer is actively maintained and regularly enhanced with more features, data recipes, and datasets.

AI Data Mining

62.4K

Fresh Picks

MINT 1T

MINT-1T is a multimodal dataset open-sourced by Salesforce AI, containing one trillion text tokens and 3.4 billion images, making it ten times larger than existing open-source datasets. It includes not only HTML documents but also PDF documents and ArXiv papers, enriching the dataset's diversity. The construction of MINT-1T involves multiple data collection, processing, and filtering steps to ensure high quality and diversity of the data.

Model Training and Deployment

57.7K

SA V Dataset

The SA-V Dataset is an open-world video dataset specifically designed for training general object segmentation models, containing 51,000 diverse videos and 643,000 spatio-temporal segmentation masks (masklets). This dataset is intended for computer vision research and is available under a CC BY 4.0 license. The video content covers a wide variety of themes, including locations, objects, and scenes, with masks ranging from large-scale objects like buildings to intricate details like indoor decorations.

AI image detection and recognition

71.8K

Fresh Picks

Segment Anything Model 2

Segment Anything Model 2 (SAM 2) is a visual segmentation model launched by Meta's AI research division, FAIR. It achieves real-time video processing through a simple transformer architecture and streaming memory design. The model builds a loop data engine through user interaction, gathering the largest video segmentation dataset to date, SA-V. SAM 2 is trained on this dataset, delivering outstanding performance across a wide range of tasks and visual domains.

AI image detection and recognition

54.6K

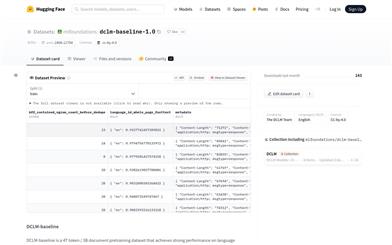

DCLM Baseline

DCLM-baseline is a pretraining dataset for language model benchmarking, containing 4T tokens and 3B documents. It is curated from the Common Crawl dataset after a careful planning of data cleaning, filtering, and deduplication steps, aiming to demonstrate the importance of data curation in training efficient language models. The dataset is only for research purposes and should not be used in production environments or for training domain-specific models, such as those for code and mathematics.

AI Model

51.6K

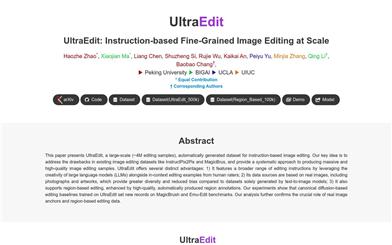

Ultraedit

UltraEdit is a large-scale image editing dataset comprising approximately 4 million automatically generated, instruction-based image editing samples. It leverages the creativity of large language models (LLMs) and the contextual editing examples provided by human evaluators, offering a systematic approach to produce large-scale and high-quality image editing samples. Key advantages of UltraEdit include:

1) **Wider Range of Editing Instructions:** It utilizes the creativity of LLMs and contextual editing examples from human evaluators to provide a broader spectrum of editing instructions.

2) **Diverse Data Source:** Its data source is based on real-world images, encompassing photographs and artwork, leading to increased diversity and reduced bias.

3) **Region-Based Editing Support:** Enhanced by high-quality, automatically generated region annotations, it supports region-based editing.

AI image editing

59.9K

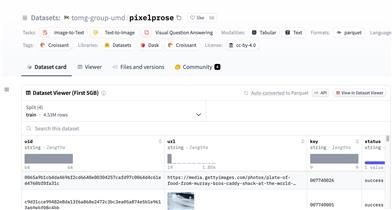

Pixelprose

PixelProse, created by the tomg-group-umd, is a large-scale dataset generating over 16 million detailed image descriptions using the advanced vision-language model Gemini 1.0 Pro Vision. This dataset is crucial for developing and improving image-to-text conversion technologies and can be used for tasks like image captioning and visual question answering.

AI image detection and recognition

54.9K

Emo Visual Data

emo-visual-data is a publicly available emoji visual annotation dataset. It collects 5329 emojis through visual annotation completed using the glm-4v and step-free-api projects. This dataset can be used to train and test multimodal large models and is crucial for understanding the relationship between image content and textual descriptions.

AI image detection and recognition

52.4K

Ultramedical

The UltraMedical project aims to develop specialized general-purpose models for the biomedical field. These models are designed to answer questions related to exams, clinical scenarios, and research questions while maintaining a broad base of general knowledge to effectively handle cross-domain issues. By utilizing advanced alignment techniques, including supervised fine-tuning (SFT), direct preference optimization (DPO), and odds ratio preference optimization (ORPO), training large language models on the UltraMedical dataset creates powerful and versatile models that effectively serve the needs of the biomedical community.

AI medical health

48.3K

Flashrag

FlashRAG is a Python toolkit designed for replicating and developing research in retrieval-augmented generation (RAG). It includes 32 pre-processed benchmark RAG datasets and 12 state-of-the-art RAG algorithms. FlashRAG offers a comprehensive and customizable framework, encompassing essential components for RAG scenarios such as retriever, reranker, generator, and compressor, enabling flexible assembly of complex pipelines. Moreover, FlashRAG provides efficient preprocessing stages and optimized execution, supporting tools like vLLM and FastChat to accelerate LLM inference and vector index management.

AI Development Assistant

63.8K

Fresh Picks

Imageinwords

ImageInWords (IIW) is a human-in-the-loop annotation framework that involves planning highly detailed image descriptions and generating a new dataset. This dataset achieves state-of-the-art results by evaluating automation and human parallel (SxS) metrics. The IIW dataset significantly improves in several dimensions while generating descriptions compared to previous datasets and the outputs of GPT-4V, including readability, comprehensiveness, specificity, imagination, and human similarity. Furthermore, models fine-tuned with the IIW dataset excel in text-to-image generation and visual language reasoning tasks, producing descriptions that are closer to the original images.

AI image detection and recognition

55.5K

English Picks

Wildchat

The WildChat dataset is a corpus consisting of one million real-world user interactions with ChatGPT, characterized by diverse language and user prompts. This dataset is used to fine-tune Meta's Llama-2 and create the WildLlama-7b-user-assistant chatbot, capable of predicting user prompts and assistant responses.

AI Model

65.4K

Fineweb

The FineWeb dataset contains over 150 billion web pages of cleaned and deduplicated English text sourced from CommonCrawl. Designed specifically for pre-training large language models, it aims to advance the development of open-source models. The dataset has been meticulously processed and filtered to ensure high quality, making it suitable for a variety of natural language processing tasks.

AI Data Mining

62.7K

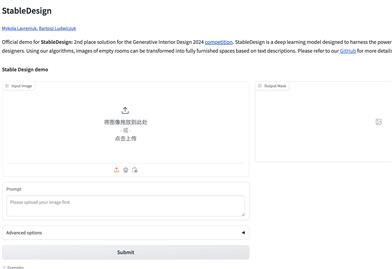

Stabledesign

The StableDesign project aims to provide a dataset and training methods for generative interior design. Users upload empty room images and text prompts to generate interior design images. Through data download from Airbnb, feature extraction and ControlNet model training, combined with image and natural language processing techniques, it offers new ideas and approaches.

AI indoor design

61.0K

MNBVC

MNBVC (Massive Never-ending BT Vast Chinese corpus) is a project aimed at providing rich Chinese data for AI. It includes not only mainstream cultural content but also niche cultures and internet slang. The dataset encompasses various forms of pure text Chinese data, such as news, essays, novels, books, magazines, papers, dialogues, posts, wikis, ancient poems, lyrics, product descriptions, jokes, anecdotes, and chat logs.

AI Data Mining

118.1K

- 1

- 2

Featured AI Tools

Flow AI

Flow is an AI-driven movie-making tool designed for creators, utilizing Google DeepMind's advanced models to allow users to easily create excellent movie clips, scenes, and stories. The tool provides a seamless creative experience, supporting user-defined assets or generating content within Flow. In terms of pricing, the Google AI Pro and Google AI Ultra plans offer different functionalities suitable for various user needs.

Video Production

43.1K

Nocode

NoCode is a platform that requires no programming experience, allowing users to quickly generate applications by describing their ideas in natural language, aiming to lower development barriers so more people can realize their ideas. The platform provides real-time previews and one-click deployment features, making it very suitable for non-technical users to turn their ideas into reality.

Development Platform

44.7K

Listenhub

ListenHub is a lightweight AI podcast generation tool that supports both Chinese and English. Based on cutting-edge AI technology, it can quickly generate podcast content of interest to users. Its main advantages include natural dialogue and ultra-realistic voice effects, allowing users to enjoy high-quality auditory experiences anytime and anywhere. ListenHub not only improves the speed of content generation but also offers compatibility with mobile devices, making it convenient for users to use in different settings. The product is positioned as an efficient information acquisition tool, suitable for the needs of a wide range of listeners.

AI

42.5K

Minimax Agent

MiniMax Agent is an intelligent AI companion that adopts the latest multimodal technology. The MCP multi-agent collaboration enables AI teams to efficiently solve complex problems. It provides features such as instant answers, visual analysis, and voice interaction, which can increase productivity by 10 times.

Multimodal technology

43.3K

Chinese Picks

Tencent Hunyuan Image 2.0

Tencent Hunyuan Image 2.0 is Tencent's latest released AI image generation model, significantly improving generation speed and image quality. With a super-high compression ratio codec and new diffusion architecture, image generation speed can reach milliseconds, avoiding the waiting time of traditional generation. At the same time, the model improves the realism and detail representation of images through the combination of reinforcement learning algorithms and human aesthetic knowledge, suitable for professional users such as designers and creators.

Image Generation

42.5K

Openmemory MCP

OpenMemory is an open-source personal memory layer that provides private, portable memory management for large language models (LLMs). It ensures users have full control over their data, maintaining its security when building AI applications. This project supports Docker, Python, and Node.js, making it suitable for developers seeking personalized AI experiences. OpenMemory is particularly suited for users who wish to use AI without revealing personal information.

open source

42.8K

Fastvlm

FastVLM is an efficient visual encoding model designed specifically for visual language models. It uses the innovative FastViTHD hybrid visual encoder to reduce the time required for encoding high-resolution images and the number of output tokens, resulting in excellent performance in both speed and accuracy. FastVLM is primarily positioned to provide developers with powerful visual language processing capabilities, applicable to various scenarios, particularly performing excellently on mobile devices that require rapid response.

Image Processing

41.7K

Chinese Picks

Liblibai

LiblibAI is a leading Chinese AI creative platform offering powerful AI creative tools to help creators bring their imagination to life. The platform provides a vast library of free AI creative models, allowing users to search and utilize these models for image, text, and audio creations. Users can also train their own AI models on the platform. Focused on the diverse needs of creators, LiblibAI is committed to creating inclusive conditions and serving the creative industry, ensuring that everyone can enjoy the joy of creation.

AI Model

6.9M