# Video Understanding

Videorag

VideoRAG is an innovative retrieval-augmented generation framework specifically developed for understanding and processing videos with very long contexts. It intelligently combines graph-driven textual knowledge anchoring with hierarchical multimodal context encoding, enabling comprehension of videos of unrestricted lengths. The framework dynamically builds knowledge graphs, maintains semantic coherence across multiple video contexts, and enhances retrieval efficiency through adaptive multimodal fusion mechanisms. Key advantages of VideoRAG include efficient processing of long-context videos, structured video knowledge indexing, and multimodal retrieval capabilities, allowing it to provide comprehensive answers to complex queries. This framework holds significant technical value and application prospects in the field of long video understanding.

Video Editing

53.8K

Chinese Picks

Qwen2.5 VL

Qwen2.5-VL is the latest flagship visual language model released by the Qwen team, representing a significant advancement in the field of visual language models. It can not only recognize common objects but also analyze complex content in images, such as text, charts, and icons, and supports understanding of long videos and event localization. The model performs exceptionally well in various benchmark tests, particularly excelling in document understanding and visual agent tasks, showcasing strong visual comprehension and reasoning abilities. Its main advantages include efficient multimodal understanding, powerful long video processing capabilities, and flexible tool invocation features, making it suitable for a variety of application scenarios.

AI Model

94.4K

Tarsier

Tarsier is a series of large-scale video language models developed by the ByteDance research team, designed to generate high-quality video descriptions and equipped with robust video comprehension capabilities. The model significantly enhances the accuracy and detail of video descriptions through a two-stage training strategy (multi-task pre-training and multi-granularity instruction fine-tuning). Its main advantages include high precision in video description, understanding of complex video content, and achieving state-of-the-art (SOTA) results in multiple video comprehension benchmark tests. The model's development addresses the shortcomings in detail and accuracy of existing video language models, achieving new heights in video description through extensive training on high-quality data and innovative training methods. Currently, the model is not explicitly priced and is mainly targeted at academic research and commercial applications, suitable for scenarios requiring high-quality understanding and generation of video content.

Video Production

79.2K

Videollama3

VideoLLaMA3, developed by the DAMO-NLP-SG team, is a state-of-the-art multimodal foundational model specializing in image and video understanding. Based on the Qwen2.5 architecture, it integrates advanced visual encoders (such as SigLip) with powerful language generation capabilities to address complex visual and language tasks. Key advantages include efficient spatiotemporal modeling, strong multimodal fusion capabilities, and optimized training on large-scale datasets. This model is suitable for applications requiring deep video understanding, such as video content analysis and visual question answering, demonstrating significant potential for both research and commercial use.

Video Production

57.1K

Omagent.com

OmAgent is a multimodal native agent framework used for smart devices and similar applications. It employs a divide-and-conquer algorithm to efficiently solve complex tasks, capable of preprocessing long videos and answering questions with human-like precision. Additionally, it can provide personalized clothing suggestions based on user requests and optional weather conditions. Currently, the official website does not specify pricing, but the features are primarily targeted towards users who require efficient task processing and intelligent interactions, such as developers and businesses.

Smart Body

46.9K

Videoprompt.org

videoprompt.org is a website dedicated to AI video generation prompts, offering a range of instructions for generating, editing, or understanding video content. Through a carefully curated collection of high-quality prompts, a community-driven approach, and a focus on practical applications, it helps users unlock the full potential of AI models in video processing, improving workflow efficiency and achieving consistently high-quality results.

Video Production

58.0K

Apollo LMMs

Apollo is an advanced family of large multimodal models focused on video understanding. It systematically explores the design space of video-LMMs, revealing the key factors driving performance and providing practical insights for optimizing model efficacy. By uncovering 'Scaling Consistency', Apollo enables design decisions made on smaller models and datasets to be reliably transferred to larger models, significantly reducing computational costs. The main advantages of Apollo include efficient design decisions, optimized training schedules, and data mixing, along with a novel benchmarking tool, ApolloBench, for effective evaluation.

Video Production

49.7K

Qwen2 VL 7B

Qwen2-VL-7B is the latest iteration of the Qwen-VL model, representing a year of innovative advancements. It achieves state-of-the-art performance on visual understanding benchmarks, including MathVista, DocVQA, RealWorldQA, MTVQA, among others. The model can comprehend videos over 20 minutes long, providing high-quality support for video-based question answering, dialogue, and content creation. Additionally, Qwen2-VL supports multiple languages, including English, Chinese, and most European languages, as well as Japanese, Korean, Arabic, Vietnamese, and more. Updates to the model architecture include Naive Dynamic Resolution and Multimodal Rotary Position Embedding (M-ROPE), enhancing its multimodal processing capabilities.

AI Model

46.9K

Qwen2 VL 2B

Qwen2-VL-2B is the latest iteration of the Qwen-VL model, representing nearly a year's worth of innovations. The model has achieved state-of-the-art performance on visual understanding benchmarks including MathVista, DocVQA, RealWorldQA, and MTVQA. It can comprehend over 20-minute videos, providing high-quality support for video-based question answering, dialogue, and content creation. Qwen2-VL also supports multiple languages, including most European languages, Japanese, Korean, Arabic, Vietnamese, in addition to English and Chinese. Model architecture updates include Naive Dynamic Resolution and Multimodal Rotary Position Embedding (M-ROPE), which enhance its multimodal processing capabilities.

AI Model

48.6K

Ppllava

PPLLaVA is an efficient large-scale video language model that combines fine-grained visual prompt alignment, a convolutional-style pooling mechanism for visual token compression based on user instructions, and CLIP context extension. This model has achieved new state-of-the-art results on datasets such as VideoMME, MVBench, VideoChatGPT Bench, and VideoQA Bench, using only 1024 visual tokens, achieving an 8-fold improvement in throughput.

Video Production

46.4K

Longvu

LongVU is an innovative long video language understanding model that reduces the number of video annotations through a spatiotemporal adaptive compression mechanism while preserving visual details in lengthy videos. The importance of this technology lies in its ability to handle a large number of video frames while losing only a minimal amount of visual information within a limited context length, significantly enhancing long video content understanding and analysis capabilities. LongVU surpasses existing methods in various video understanding benchmark tests, particularly for tasks involving videos up to one hour long. Furthermore, LongVU can effectively scale down to smaller model sizes while maintaining state-of-the-art video understanding performance.

Model Training and Deployment

48.0K

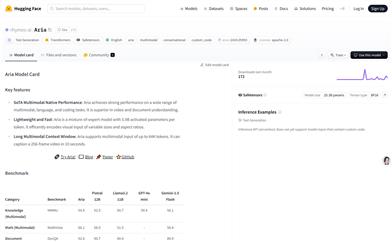

Aria

Aria is a multimodal native mixture of experts model that excels in multimodal, language, and coding tasks. It performs exceptionally well in video and document understanding, supporting up to 64K multimodal input, with the ability to describe a 256-frame video in just 10 seconds. The model has 25.3 billion parameters and can be loaded on a single A100 (80GB) GPU using bfloat16 precision. Aria was developed to meet the needs for multimodal data understanding, particularly in video and document processing. It is an open-source model aimed at advancing multimodal artificial intelligence.

AI Model

51.9K

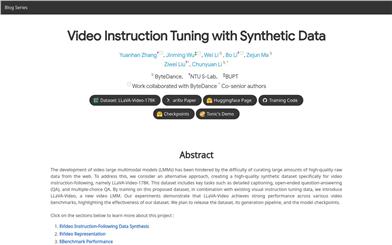

Llava Video

LLaVA-Video is a large multimodal model (LMM) focused on video instruction tuning, addressing the challenge of acquiring high-quality raw data from the internet by creating a high-quality synthetic dataset, LLaVA-Video-178K. This dataset includes detailed video descriptions, open-ended questions, and multiple-choice questions, aimed at enhancing the understanding and reasoning capabilities of video language models. The LLaVA-Video model has demonstrated outstanding performance across various video benchmarks, validating the effectiveness of its dataset.

AI Model

56.3K

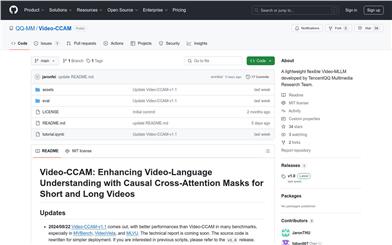

Video CCAM

Video-CCAM is a series of flexible video multilingual models (Video-MLLM) developed by the Tencent QQ Multimedia Research Team, aimed at enhancing video-language understanding, particularly suitable for both short and long video analysis. It achieves this through Causal Cross-Attention Masks. Video-CCAM has shown outstanding performance across multiple benchmark tests, especially in MVBench, VideoVista, and MLVU. The source code has been rewritten to streamline the deployment process.

AI video generation

50.8K

Fresh Picks

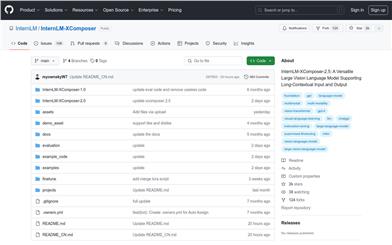

Internlm XComposer 2.5

InternLM-XComposer-2.5 is a multifunctional large visual language model that supports long context input and output. It excels in various text-image understanding and generation applications, achieving performance comparable to GPT-4V while utilizing only 7B parameters for its LLM backend. Trained on 24K interleaved image-text context, the model seamlessly scales to 96K long context through RoPE extrapolation. This long context capability makes it particularly adept at tasks requiring extensive input and output context. Furthermore, it supports ultra-high resolution understanding, fine-grained video understanding, multi-turn multi-image dialogue, web page creation, and writing high-quality text-image articles.

AI Model

73.7K

Sharegpt4video

The ShareGPT4Video series aims to promote video understanding in large video-language models (LVLMs) and video generation in text-to-video models (T2VMs) through dense and precise captions. The series includes:

1) ShareGPT4Video, a dense video caption dataset of 40K GPT4V annotations, developed through carefully designed data filtering and annotation strategies.

2) ShareCaptioner-Video, an efficient and powerful video captioning model for any video, trained on its 4.8M high-quality aesthetic video dataset.

3) ShareGPT4Video-8B, a simple yet excellent LVLM that achieved top performance on three advanced video benchmark tests.

AI video generation

74.0K

Videollama2 7B

Developed by the DAMO-NLP-SG team, VideoLLaMA2-7B is a multimodal large language model focused on video content understanding and generation. This model demonstrates significant performance in video question answering and video captioning, capable of handling complex video content and generating accurate and natural language descriptions. It has been optimized for spatio-temporal modeling and audio understanding, providing powerful support for intelligent analysis and processing of video content.

AI video generation

72.3K

Fresh Picks

Lvbench

LVBench is a benchmark specifically designed for long video understanding, aimed at advancing the ability of multimodal large language models to comprehend videos spanning several hours. This is crucial for real-world applications such as long-term decision-making, in-depth film reviews and discussions, and live sports commentary.

AI Model

47.7K

Videollama 2

VideoLLaMA 2 is a large language model optimized for video understanding tasks. It leverages advanced spatio-temporal modeling and audio understanding capabilities to enhance the parsing and comprehension of video content. The model demonstrates exceptional performance in tasks such as multiple-choice video question answering and video captioning.

AI video understanding

82.0K

VILA

VILA is a pre-trained visual language model (VLM) that achieves video and multi-image understanding capabilities through pre-training with large-scale interleaved image-text data. VILA can be deployed on edge devices using the AWQ 4bit quantization and TinyChat framework. Key advantages include: 1) Interleaved image-text data is crucial for performance enhancement; 2) Not freezing the large language model (LLM) during interleaved image-text pre-training promotes context learning; 3) Re-mixing text instruction data is critical for boosting VLM and plain text performance; 4) Token compression can expand the number of video frames. VILA demonstrates captivating capabilities including video inference, context learning, visual reasoning chains, and better world knowledge.

AI Model

84.7K

Video Mamba Suite

The Video Mamba Suite is a new state-space model suite for video understanding designed to explore and assess the potential of Mamba in video modeling. It contains 14 models/modules, covering 12 video understanding tasks, demonstrating efficient performance and superiority in both video and video-language tasks.

AI Video Generation

67.3K

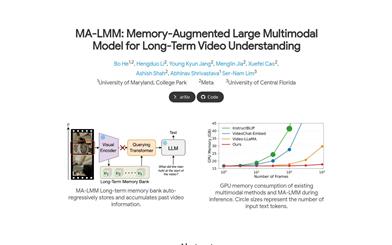

MA LMM

MA-LMM is a large-scale multimodal model based on a large language model, primarily designed for long-term video understanding. It employs an online video processing approach and utilizes a memory store to retain past video information. This enables it to conduct long-term analysis of video content without exceeding the limitations of language model context length or GPU memory. MA-LMM can seamlessly integrate with existing multimodal language models and has achieved state-of-the-art performance in tasks such as long video understanding, video question answering, and video captioning.

AI video generation

74.2K

Minigpt4 Video

MiniGPT4-Video is a multimodal large model designed for video understanding. It can process temporal visual data and text data, generate captions and slogans, and is suitable for video question answering. Based on MiniGPT-v2, it incorporates the visual backbone EVA-CLIP and undergoes multi-stage training, including large-scale video-text pre-training and video question-answering fine-tuning. It achieves significant improvements on benchmarks such as MSVD, MSRVTT, TGIF, and TVQA. The pricing is currently unknown.

AI Video Generation

97.7K

Videoprism

VideoPrism is a general-purpose video coding model that achieves leading performance across various video understanding tasks, including classification, localization, retrieval, subtitle generation, and Q&A. Its innovation lies in the very large and diverse pre-training dataset, which contains 36 million high-quality video-text pairs and 582 million video clips with noisy text. The pre-training uses a two-phase strategy: initially, it employs contrastive learning to match videos with text, followed by predicting masked video blocks to fully utilize different supervisory signals. A fixed VideoPrism model can be directly adapted to downstream tasks and has refreshed state-of-the-art scores on 30 video understanding benchmarks.

AI video generation

86.7K

Featured AI Tools

Flow AI

Flow is an AI-driven movie-making tool designed for creators, utilizing Google DeepMind's advanced models to allow users to easily create excellent movie clips, scenes, and stories. The tool provides a seamless creative experience, supporting user-defined assets or generating content within Flow. In terms of pricing, the Google AI Pro and Google AI Ultra plans offer different functionalities suitable for various user needs.

Video Production

45.0K

Nocode

NoCode is a platform that requires no programming experience, allowing users to quickly generate applications by describing their ideas in natural language, aiming to lower development barriers so more people can realize their ideas. The platform provides real-time previews and one-click deployment features, making it very suitable for non-technical users to turn their ideas into reality.

Development Platform

48.6K

Listenhub

ListenHub is a lightweight AI podcast generation tool that supports both Chinese and English. Based on cutting-edge AI technology, it can quickly generate podcast content of interest to users. Its main advantages include natural dialogue and ultra-realistic voice effects, allowing users to enjoy high-quality auditory experiences anytime and anywhere. ListenHub not only improves the speed of content generation but also offers compatibility with mobile devices, making it convenient for users to use in different settings. The product is positioned as an efficient information acquisition tool, suitable for the needs of a wide range of listeners.

AI

45.5K

Minimax Agent

MiniMax Agent is an intelligent AI companion that adopts the latest multimodal technology. The MCP multi-agent collaboration enables AI teams to efficiently solve complex problems. It provides features such as instant answers, visual analysis, and voice interaction, which can increase productivity by 10 times.

Multimodal technology

48.3K

Chinese Picks

Tencent Hunyuan Image 2.0

Tencent Hunyuan Image 2.0 is Tencent's latest released AI image generation model, significantly improving generation speed and image quality. With a super-high compression ratio codec and new diffusion architecture, image generation speed can reach milliseconds, avoiding the waiting time of traditional generation. At the same time, the model improves the realism and detail representation of images through the combination of reinforcement learning algorithms and human aesthetic knowledge, suitable for professional users such as designers and creators.

Image Generation

48.0K

Openmemory MCP

OpenMemory is an open-source personal memory layer that provides private, portable memory management for large language models (LLMs). It ensures users have full control over their data, maintaining its security when building AI applications. This project supports Docker, Python, and Node.js, making it suitable for developers seeking personalized AI experiences. OpenMemory is particularly suited for users who wish to use AI without revealing personal information.

open source

45.8K

Fastvlm

FastVLM is an efficient visual encoding model designed specifically for visual language models. It uses the innovative FastViTHD hybrid visual encoder to reduce the time required for encoding high-resolution images and the number of output tokens, resulting in excellent performance in both speed and accuracy. FastVLM is primarily positioned to provide developers with powerful visual language processing capabilities, applicable to various scenarios, particularly performing excellently on mobile devices that require rapid response.

Image Processing

42.5K

Chinese Picks

Liblibai

LiblibAI is a leading Chinese AI creative platform offering powerful AI creative tools to help creators bring their imagination to life. The platform provides a vast library of free AI creative models, allowing users to search and utilize these models for image, text, and audio creations. Users can also train their own AI models on the platform. Focused on the diverse needs of creators, LiblibAI is committed to creating inclusive conditions and serving the creative industry, ensuring that everyone can enjoy the joy of creation.

AI Model

6.9M