# Image-to-Video

Viddo

Viddo AI is an AI-powered video generator that converts text or images into stunning videos. It features multiple functions including text-to-video, image-to-video, and more, making it a must-have tool for endless creativity.

Text-to-Video

38.1K

Fresh Picks

Vidu Q1

Vidu Q1, launched by Shengshu Technology, is a domestically produced video generation large language model designed for video creators. It supports high-definition 1080p video generation and features cinematic camera movements and start/end frame functionality. This product ranked first in the VBench-1.0 and VBench-2.0 evaluations, offering exceptional value for money at only one-tenth the price of competitors. It is suitable for film, advertising, animation, and other fields, significantly reducing production costs and improving creative efficiency.

Video Production

38.6K

Wan 2.1 AI

Wan 2.1 AI is an open-source large-scale video generation AI model developed by Alibaba. It supports text-to-video (T2V) and image-to-video (I2V) generation, capable of transforming simple input into high-quality video content. This model is significant in the field of video generation, greatly simplifying the video creation process, lowering the creation threshold, improving creation efficiency, and providing users with a wide variety of video creation possibilities. Its main advantages include high-quality video generation effects, smooth presentation of complex actions, realistic physical simulation, and rich artistic styles. Currently, this product is fully open-source, and users can use its basic functions for free. It is highly practical for individuals and businesses that have video creation needs but lack professional skills or equipment.

Video Production

68.4K

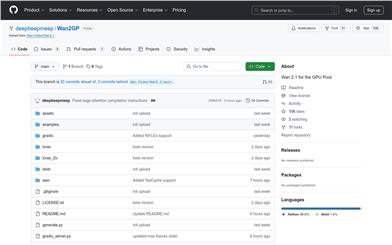

Wan2gp

Wan2GP is an improved version based on Wan2.1, aiming to provide an efficient and low-memory video generation solution for low-configuration GPU users. The model, through optimized memory management and accelerated algorithms, enables ordinary users to quickly generate high-quality video content on consumer-grade GPUs. It supports multiple tasks, including text-to-video, image-to-video, and video editing, and features a powerful video VAE architecture capable of efficiently handling 1080P videos. The emergence of Wan2GP lowers the barrier to entry for video generation technology, allowing more users to easily learn and apply it to real-world scenarios.

Video Production

74.2K

Wan2.1 T2V 14B

Wan2.1-T2V-14B is an advanced text-to-video generation model based on a diffusion transformer architecture, incorporating innovative spatiotemporal variational autoencoders (VAEs) and large-scale data training. It generates high-quality video content at various resolutions, supports both Chinese and English text input, and surpasses existing open-source and commercial models in performance and efficiency. This model is suitable for scenarios requiring efficient video generation, such as content creation, advertising production, and video editing. Currently, this model is freely available on the Hugging Face platform to promote the development and application of video generation technology.

Video Production

146.6K

Allegro TI2V

Allegro-TI2V is a text-to-image-to-video generation model that creates video content based on user-provided prompts and images. The model is recognized for its open-source nature, diverse content creation capabilities, high-quality outputs, compact efficient model parameters, and support for various precision and GPU memory optimizations. It represents cutting-edge advancements in AI technology for video generation, holding significant technical value and commercial application potential. The Allegro-TI2V model is available on the Hugging Face platform under the Apache 2.0 open-source license, allowing users to download and use it for free.

Video Production

65.4K

Dream Machine API

Dream Machine API is a creative intelligence platform that offers a range of advanced video generation models. With intuitive APIs and open-source SDKs, users can build and expand creative AI products. The platform features capabilities such as text-to-video, image-to-video, keyframe control, expansion, looping, and camera control, designed to help users collaborate with creative intelligence to create better content. The launch of Dream Machine API aims to enrich visual exploration and creativity, allowing more ideas to be tested, better narratives to be constructed, and enabling diverse stories to be told by those who previously found it difficult.

AI video generation

58.8K

Vidful.ai

Vidful.ai is an AI-powered online video generator that utilizes advanced algorithms to quickly convert text and images into high-quality video content. The product integrates technologies from Kuaishou's Kling AI and Luma AI Dream Machine, offering realistic motion effects and cinematic-level video quality, simplifying the video production process so users can create professional videos without specialized editing skills. Vidful.ai is available for free online, catering to users across various fields including marketing, education, social media creation, and e-commerce.

Video Production

152.4K

Fresh Picks

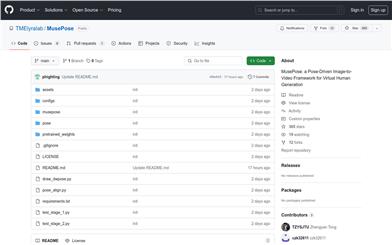

Musepose

MusePose is an image-to-video generation framework developed by Tencent Music Entertainment's Lyra Lab, designed to generate virtual character videos through pose control signals. It is the last building block of the Muse open-source series, alongside MuseV and MuseTalk, aiming to push the community towards the vision of generating virtual characters with full-body motion and interaction capabilities. Based on diffusion models and pose guidance, MusePose can generate dance videos of characters from reference images, and the results surpass the quality of almost all open-source models on the same topic.

AI video generation

91.9K

Fresh Picks

I2vedit

I2VEdit is an innovative video editing technology that extends the editing of a single frame to the entire video through a pre-trained image-to-video model. This technology can adaptively maintain the visual and motion integrity of the source video and effectively handle global editing, local editing, and moderate shape changes, which existing methods cannot achieve. The core of I2VEdit includes two main processes: rough motion extraction and appearance refinement, which are precisely adjusted through coarse-grained attention matching. In addition, a skip interval strategy is introduced to mitigate the quality degradation in the automatic regression generation process of multiple video segments. Experimental results show that I2VEdit's superior performance in fine-grained video editing demonstrates its ability to produce high-quality, temporally consistent output.

AI Video Editing

148.8K

Animatelcm SVD Xt

AnimateLCM-SVD-xt is a novel image-to-video generation model capable of producing high-quality, coherent videos with minimal steps. The model utilizes consistency knowledge distillation and stereoscopic matching learning techniques to ensure smoother and more consistent video generation, while significantly reducing computational intensity. Key features include: 1) Generation of a 576x1024 resolution video with 25 frames within 4 to 8 steps; 2) A remarkable 12.5 times reduction in computational complexity compared to a standard video diffusion model; 3) High-quality video generation without additional classifier guidance.

AI video generation

284.0K

Stable Video Diffusion 1.1 Image To Video

Stable Video Diffusion (SVD) 1.1 Image-to-Video is a diffusion model that generates videos corresponding to static images as conditioning frames. This latent diffusion model is trained to generate short video clips from images. At a resolution of 1024x576, the model is trained to generate 25-frame videos using the same-sized context frames and is fine-tuned from SVD Image-to-Video [25 frames]. During fine-tuning, conditions like 6FPS and Motion Bucket Id 127 are fixed to improve output consistency without adjusting hyperparameters.

AI video generation

397.2K

Featured AI Tools

Flow AI

Flow is an AI-driven movie-making tool designed for creators, utilizing Google DeepMind's advanced models to allow users to easily create excellent movie clips, scenes, and stories. The tool provides a seamless creative experience, supporting user-defined assets or generating content within Flow. In terms of pricing, the Google AI Pro and Google AI Ultra plans offer different functionalities suitable for various user needs.

Video Production

43.1K

Nocode

NoCode is a platform that requires no programming experience, allowing users to quickly generate applications by describing their ideas in natural language, aiming to lower development barriers so more people can realize their ideas. The platform provides real-time previews and one-click deployment features, making it very suitable for non-technical users to turn their ideas into reality.

Development Platform

44.7K

Listenhub

ListenHub is a lightweight AI podcast generation tool that supports both Chinese and English. Based on cutting-edge AI technology, it can quickly generate podcast content of interest to users. Its main advantages include natural dialogue and ultra-realistic voice effects, allowing users to enjoy high-quality auditory experiences anytime and anywhere. ListenHub not only improves the speed of content generation but also offers compatibility with mobile devices, making it convenient for users to use in different settings. The product is positioned as an efficient information acquisition tool, suitable for the needs of a wide range of listeners.

AI

42.5K

Minimax Agent

MiniMax Agent is an intelligent AI companion that adopts the latest multimodal technology. The MCP multi-agent collaboration enables AI teams to efficiently solve complex problems. It provides features such as instant answers, visual analysis, and voice interaction, which can increase productivity by 10 times.

Multimodal technology

43.3K

Chinese Picks

Tencent Hunyuan Image 2.0

Tencent Hunyuan Image 2.0 is Tencent's latest released AI image generation model, significantly improving generation speed and image quality. With a super-high compression ratio codec and new diffusion architecture, image generation speed can reach milliseconds, avoiding the waiting time of traditional generation. At the same time, the model improves the realism and detail representation of images through the combination of reinforcement learning algorithms and human aesthetic knowledge, suitable for professional users such as designers and creators.

Image Generation

42.2K

Openmemory MCP

OpenMemory is an open-source personal memory layer that provides private, portable memory management for large language models (LLMs). It ensures users have full control over their data, maintaining its security when building AI applications. This project supports Docker, Python, and Node.js, making it suitable for developers seeking personalized AI experiences. OpenMemory is particularly suited for users who wish to use AI without revealing personal information.

open source

42.8K

Fastvlm

FastVLM is an efficient visual encoding model designed specifically for visual language models. It uses the innovative FastViTHD hybrid visual encoder to reduce the time required for encoding high-resolution images and the number of output tokens, resulting in excellent performance in both speed and accuracy. FastVLM is primarily positioned to provide developers with powerful visual language processing capabilities, applicable to various scenarios, particularly performing excellently on mobile devices that require rapid response.

Image Processing

41.7K

Chinese Picks

Liblibai

LiblibAI is a leading Chinese AI creative platform offering powerful AI creative tools to help creators bring their imagination to life. The platform provides a vast library of free AI creative models, allowing users to search and utilize these models for image, text, and audio creations. Users can also train their own AI models on the platform. Focused on the diverse needs of creators, LiblibAI is committed to creating inclusive conditions and serving the creative industry, ensuring that everyone can enjoy the joy of creation.

AI Model

6.9M