# Human-Computer Interaction

Magentic-UI

Magentic-UI is a research prototype of a multi-agent system that lets users browse the web and automate tasks through a transparent, controllable interface. Its main advantage is more efficient human-machine interaction while keeping the user in control of the automation process. It suits users who need to carry out complex tasks on the web and supports a range of operations and custom settings.

Human-Computer Interaction

39.2K

Chinese Picks

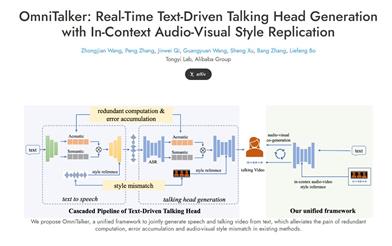

OmniTalker

OmniTalker is a unified framework proposed by Alibaba's Tongyi Lab with the aim of generating audio and video in real time to enhance human-computer interaction experiences. Its innovation lies in solving common issues in traditional text-to-speech and speech-driven video generation methods, such as out-of-sync audio-video, inconsistent styles, and system complexity. OmniTalker adopts a dual-branch diffusion transformer architecture, achieving high-fidelity audio-video outputs while maintaining efficiency. Its real-time inference speed reaches 25 frames per second, making it suitable for various interactive video chat applications and enhancing user experiences.

Video Generation

39.2K
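
The 25 frames per second figure quoted for OmniTalker implies a per-frame time budget. The sketch below is only that arithmetic; the function name `frame_budget_ms` is illustrative and not part of OmniTalker.

```python
def frame_budget_ms(fps: float) -> float:
    """Return the time available per frame, in milliseconds, at a given frame rate."""
    if fps <= 0:
        raise ValueError("fps must be positive")
    return 1000.0 / fps


# At the 25 fps quoted above, each audio-video frame must be produced in 40 ms.
budget = frame_budget_ms(25)
print(f"{budget:.1f} ms per frame")
```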

Conversational Video Interface

Conversational Video Interface (CVI) is an emotionally intelligent conversational video interface launched by Tavus. Three models work together (Phoenix-3, Raven-0, and Sparrow-0) to give AI human-like capabilities for perceiving, listening, understanding, and interacting in real time. Tavus positions CVI as a new mode of human-computer communication rather than a single tool, with applications in fields such as healthcare, mental health, sales training, and customer service. Its core advance is bringing the subtle emotions and rhythms of human conversation into AI interaction, so that the AI does more than return a simple response: it can reason, react, and change how people interact with machines.

Chatbot

63.2K

Project Mariner

Project Mariner is an early research prototype developed by Google DeepMind based on the Gemini 2.0 model, aimed at exploring future human-computer interaction methods, particularly within web browsers. This project is capable of understanding information on the browser screen, including pixels and web elements such as text, code, images, and forms, and utilizing this information to accomplish tasks. Technically, Project Mariner allows direct operations within the browser through a Chrome extension, providing users with a novel agent service experience.

AI search

53.5K

Fresh Picks

Ant Design X

Ant Design X is an AI interface solution developed by the Ant Design team, based on the RICH design paradigm (Roles, Intentions, Conversations, and Hybrid Interfaces). It continues the Ant Design design language and provides a new AGI Hybrid-UI solution. Ant Design X aims to improve the efficiency and experience of human-computer interaction through AI technology and applies across AI scenarios including Web standalone, Web assistant, and Web embedded. Its key benefits include easy configuration, an exceptional universal chart library, and a strong capability to quickly comprehend and express AI intentions. Ant Design X grew out of practice and iteration across a large number of AI products within Ant Group and aims to create a better intelligent visual experience.

AI design tools

53.5K

Gyges Labs

Gyges Labs is committed to creating smart wearable devices for the AI era, integrating unique advanced optical technology with collaborative AI technology. The company leverages its team's expertise in micro-nano optics to develop the DigiWindow technology based on retinal projection principles, resulting in the world's smallest and lightest near-eye display module. Compared to optical solutions like Birdbath and waveguides, DigiWindow reduces dimensions from centimeters to millimeters, lowers power consumption, and provides complete optical compatibility. Additionally, building on the team's accumulated experience in collaborative AI, Gyges Labs has developed the Mirron AI engine, tailored for wearable devices based on mirror neuron principles, to enhance perception and interaction capabilities for future wearable devices, establishing a solid foundation for the upcoming 'second brain' devices.

Retinal Projection

68.2K

PARTNR

PARTNR is a large-scale benchmarking initiative released by Meta FAIR, which includes 100,000 natural language tasks aimed at studying multi-agent reasoning and planning. PARTNR utilizes large language models (LLMs) to generate tasks while minimizing errors through simulation loops. It also supports evaluations of AI agents in collaboration with real human partners, facilitated through human-in-the-loop infrastructure. PARTNR reveals significant limitations of existing LLM-based planners in task coordination, tracking, and recovery from errors, with humans solving 93% of tasks compared to just 30% for LLMs.

Research Instruments

46.9K

Agent S

Agent S is an open agent framework designed for autonomous interaction with computers through a graphical user interface (GUI). It aims to transform human-computer interaction by automating complex, multi-step tasks. The framework introduces experience-augmented hierarchical planning, which draws on online web knowledge and narrative memory to extract high-level experience from past interactions and decompose complex tasks into manageable subtasks, and uses episodic memory to provide step-by-step guidance. Agent S continually refines its actions and learns from experience, which makes its task planning adaptive and effective. On the OSWorld benchmark, Agent S outperformed the baseline by 9.37 percentage points in success rate (an 83.6% relative improvement), and it also generalized well to the WindowsAgentArena benchmark.

AI Agents

49.4K
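
The two Agent S figures above (a 9.37 gain in success rate and an 83.6% relative improvement) are enough to back out the success rates they imply. The sketch below assumes the 9.37 figure is an absolute gain in percentage points; the function and variable names are illustrative, and the printed rates are derived from those two numbers rather than quoted from this listing.

```python
def baseline_from_gains(absolute_gain_pp: float, relative_gain_pct: float) -> float:
    """Recover the baseline success rate (in %) from an absolute gain in
    percentage points and the same gain expressed as a relative improvement (%).

    relative_gain = absolute_gain / baseline  =>  baseline = absolute_gain / relative_gain
    """
    if relative_gain_pct <= 0:
        raise ValueError("relative_gain_pct must be positive")
    return absolute_gain_pp / (relative_gain_pct / 100.0)


absolute_gain_pp = 9.37   # assumed to be percentage points
relative_gain_pct = 83.6  # relative improvement over the baseline

baseline_rate = baseline_from_gains(absolute_gain_pp, relative_gain_pct)
agent_s_rate = baseline_rate + absolute_gain_pp

print(f"implied baseline success rate: {baseline_rate:.2f}%")  # 11.21%
print(f"implied Agent S success rate:  {agent_s_rate:.2f}%")   # 20.58%
```

Under that assumption, the success rate nearly doubles in relative terms while remaining low in absolute terms.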

English Picks

Computer Use

Computer Use is a capability introduced with Anthropic's Claude 3.5 Sonnet model that lets the model operate a computer the way a person does, by performing actions such as clicking on the screen and entering data. It marks a major advance in AI's ability to replicate human behavior and opens up a wide range of applications. Computer Use comes with improvements in safety, multimodal capability, and logical reasoning, and represents a new frontier in AI technology. Currently in public beta, its performance stands out among comparable AI models.

AI Agents

52.2K

Chinese Picks

Xincheng Lingo Voice Model

The Xincheng Lingo Voice Model is an advanced artificial intelligence voice model, focusing on providing efficient and accurate voice recognition and processing services. It understands and processes natural language, making human-computer interaction smoother and more natural. Built on the powerful AI technology of Xihu Xincheng, this model aims to deliver high-quality voice interaction experiences across various scenarios.

AI speech recognition

64.3K

LSLM

The Listening-while-Speaking Language Model (LSLM) is a conversational AI model aimed at making human-computer interaction more natural. Using full duplex modeling (FDM), it can listen while it speaks, which significantly improves real-time interactivity: a user can interrupt when the generated content is unsatisfactory and get an immediate response. LSLM uses a token-based decoder for TTS speech generation and a streaming self-supervised learning (SSL) encoder for real-time audio input, and it explores the best interaction balance through three fusion strategies: early fusion, middle fusion, and late fusion.

Chatbots

74.5K

ControlMM

ControlMM is a full-body motion generation framework equipped with plug-and-play multimodal control capabilities. It can robustly generate movements across various domains, including Text-to-Motion, Speech-to-Gesture, and Music-to-Dance. The model has significant advantages in controllability, sequence coherence, and motion realism, providing a new motion generation solution for the field of artificial intelligence.

AI Model

71.2K

Fresh Picks

V-Express

V-Express is an avatar video generation model developed by Tencent AI Lab. It balances different control signals through a series of progressive dropping operations, so that the generated video accounts for pose, the input image, and audio together. The model is specifically optimized for weak audio signals, addressing the challenge of generating avatar videos from control signals of differing strength.

AI avatar generation

102.1K

English Picks

The Shape Of AI

The Shape of AI is a website focused on AI interaction patterns, offering in-depth insights into integrating AI into design. It emphasizes the importance of user experience and explores how design can optimize human-computer interaction in an AI-driven world. The site includes a wealth of resources and tools to help designers and developers understand emerging AI patterns and use them to improve their products and services.

AI design tools

85.8K

English Picks

Hume AI EVI

Hume AI's Empathetic Voice Interface (EVI) is an API driven by an Empathetic Large Language Model (eLLM) that can understand and emulate tone of voice, word emphasis, and more to optimize human-computer interaction. Built on over a decade of research, millions of proprietary data points, and more than 30 papers published in leading journals, EVI aims to give any application a more natural, empathetic voice interface and make interactions with AI more human-like. The technology can be applied in fields such as sales and meeting analysis, health and wellness, AI research services, and social networking.

AI speech assistant

72.0K

EMAGE

EMAGE is a unified model for generating holistic conversational gestures. It produces natural gestures through expressive masked audio gesture modeling, capturing speech and rhythm information from the audio input and generating the corresponding body pose and hand gesture sequences. EMAGE can generate highly dynamic and expressive gestures, improving the interactive experience of virtual characters.

AI image generation

75.1K

InsActor

InsActor is a character control system based on physical simulation. It drives characters to complete interactive tasks in complex environments from natural language instructions. The system uses conditional and adversarial diffusion models for multi-level planning and combines them with a low-level controller to achieve stable, robust control. Its smooth control and natural interaction make it suitable for applications such as creative content generation, interactive entertainment, and human-computer interaction.

AI Agents

73.4K

# Featured AI Tools

Flow AI

Flow is an AI-driven movie-making tool designed for creators, utilizing Google DeepMind's advanced models to allow users to easily create excellent movie clips, scenes, and stories. The tool provides a seamless creative experience, supporting user-defined assets or generating content within Flow. In terms of pricing, the Google AI Pro and Google AI Ultra plans offer different functionalities suitable for various user needs.

Video Production

42.8K

NoCode

NoCode is a platform that requires no programming experience: users describe their ideas in natural language and quickly generate applications. It aims to lower the barrier to development so that more people can realize their ideas. With real-time previews and one-click deployment, it is well suited to non-technical users.

Development Platform

44.7K

ListenHub

ListenHub is a lightweight AI podcast generation tool that supports both Chinese and English. Based on cutting-edge AI technology, it can quickly generate podcast content of interest to users. Its main advantages include natural dialogue and ultra-realistic voice effects, allowing users to enjoy high-quality auditory experiences anytime and anywhere. ListenHub not only improves the speed of content generation but also offers compatibility with mobile devices, making it convenient for users to use in different settings. The product is positioned as an efficient information acquisition tool, suitable for the needs of a wide range of listeners.

AI

42.2K

MiniMax Agent

MiniMax Agent is an intelligent AI companion built on the latest multimodal technology. MCP-based multi-agent collaboration lets teams of AI agents solve complex problems efficiently. It offers instant answers, visual analysis, and voice interaction, and is claimed to increase productivity tenfold.

Multimodal technology

43.1K

Chinese Picks

Tencent Hunyuan Image 2.0

Tencent Hunyuan Image 2.0 is Tencent's latest AI image generation model, with significantly improved generation speed and image quality. Using a codec with an ultra-high compression ratio and a new diffusion architecture, it can generate images in milliseconds, removing the wait typical of traditional generation. The model also improves image realism and detail by combining reinforcement learning algorithms with human aesthetic knowledge, making it suitable for professional users such as designers and creators.

Image Generation

42.2K

OpenMemory MCP

OpenMemory is an open-source personal memory layer that provides private, portable memory management for large language models (LLMs). It ensures users have full control over their data, maintaining its security when building AI applications. This project supports Docker, Python, and Node.js, making it suitable for developers seeking personalized AI experiences. OpenMemory is particularly suited for users who wish to use AI without revealing personal information.

open source

42.8K

FastVLM

FastVLM is an efficient visual encoding model designed for vision language models. It uses the FastViTHD hybrid vision encoder to reduce both the encoding time for high-resolution images and the number of output tokens, giving strong performance in speed and accuracy. FastVLM aims to give developers powerful vision-language processing capabilities across a range of scenarios, and it performs especially well on mobile devices that require fast responses.

Image Processing

41.4K

Chinese Picks

LiblibAI

LiblibAI is a leading Chinese AI creative platform offering powerful AI creative tools to help creators bring their imagination to life. The platform provides a vast library of free AI creative models, allowing users to search and utilize these models for image, text, and audio creations. Users can also train their own AI models on the platform. Focused on the diverse needs of creators, LiblibAI is committed to creating inclusive conditions and serving the creative industry, ensuring that everyone can enjoy the joy of creation.

AI Model

6.9M