# Diffusion

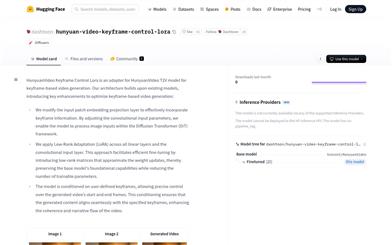

HunyuanVideo Keyframe Control LoRA

HunyuanVideo Keyframe Control LoRA is an adapter for the HunyuanVideo text-to-video (T2V) model that adds keyframe-conditioned video generation. It modifies the input embedding layer to incorporate keyframe information and applies Low-Rank Adaptation (LoRA) to the linear and convolutional input layers, which keeps fine-tuning efficient. By defining keyframes, users can precisely control the first and last frames of the generated video; the output blends seamlessly with the specified keyframes, improving the video's coherence and narrative flow. The adapter is particularly useful in scenarios that require precise control over video content.
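The LoRA technique mentioned above can be illustrated with a short sketch. This is a generic example of low-rank adaptation on a single linear layer, assuming the standard formulation y = W x + (alpha / rank) · B(A x) with the pretrained weight W frozen and B initialized to zero. It is not the HunyuanVideo adapter's actual code; the class `LoRALinear` and its `rank` and `alpha` parameters are hypothetical names chosen for illustration, and the convolutional case is omitted.

```python
import random
from typing import List

Matrix = List[List[float]]
Vector = List[float]


def matvec(m: Matrix, v: Vector) -> Vector:
    """Multiply a (rows x cols) matrix by a length-cols vector."""
    return [sum(w * x for w, x in zip(row, v)) for row in m]


class LoRALinear:
    """Hypothetical LoRA-wrapped linear layer: y = W x + (alpha / rank) * B (A x).

    W (out x in) is the frozen pretrained weight; only A (rank x in) and
    B (out x rank) would be trained. B starts at zero, so before any
    training the adapted layer reproduces the base layer exactly.
    """

    def __init__(self, weight: Matrix, rank: int = 4, alpha: float = 4.0, seed: int = 0):
        out_dim, in_dim = len(weight), len(weight[0])
        rng = random.Random(seed)
        self.weight = weight  # frozen base weight, never updated here
        # Small random init for A, zeros for B (standard LoRA initialization).
        self.A = [[rng.gauss(0.0, 0.01) for _ in range(in_dim)] for _ in range(rank)]
        self.B = [[0.0] * rank for _ in range(out_dim)]
        self.scale = alpha / rank

    def forward(self, x: Vector) -> Vector:
        base = matvec(self.weight, x)               # frozen path
        delta = matvec(self.B, matvec(self.A, x))   # low-rank update path
        return [b + self.scale * d for b, d in zip(base, delta)]

    def trainable_params(self) -> int:
        """Count only the adapter parameters (A and B), not W."""
        return len(self.A) * len(self.A[0]) + len(self.B) * len(self.B[0])


# Usage: a 3x2 base layer with a rank-1 adapter.
W = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
layer = LoRALinear(W, rank=1, alpha=1.0)
print(layer.forward([2.0, 3.0]))   # [2.0, 3.0, 5.0], identical to W x because B is zero
print(layer.trainable_params())    # 5 (A has 1*2 entries, B has 3*1)
```

The point of the design is the parameter count: the adapter trains rank × (in + out) values instead of in × out, which is why LoRA fine-tuning is cheap relative to updating the full layer.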

Video Production

63.2K

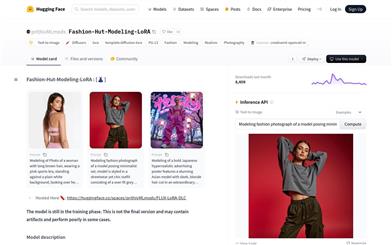

Fashion Hut Modeling LoRA

Fashion-Hut-Modeling-LoRA is a diffusion-based text-to-image model designed to generate high-quality images of fashion models. Trained on a dedicated dataset with tuned training parameters, it produces fashion photography in particular styles and with fine detail from text prompts. It is useful in fashion design and advertising, where designers and advertisers can quickly generate creative concept images. The model is still being trained, so some outputs may be less than ideal, but it already shows considerable potential. The training dataset consists of 14 high-resolution images, and training uses the AdamW optimizer with a constant learning-rate scheduler, with an emphasis on image detail and quality.

Image Generation

79.8K

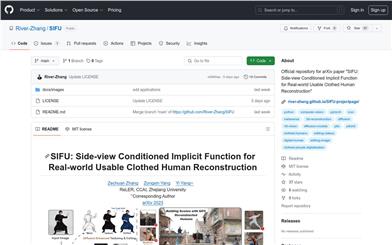

SIFU

SIFU is a method for reconstructing high-quality 3D clothed human models from a single image. Its core innovation is a side-view conditioned implicit function, which enhances feature extraction and improves geometric accuracy. SIFU also introduces a 3D-consistent texture refinement process that significantly improves texture quality and enables texture editing through a text-to-image diffusion model. It handles complex poses and loose clothing well, making it well suited to practical applications.

AI image generation

69.8K

Audio To Photoreal Embodiment

Audio to Photoreal Embodiment is a framework for generating full-body photorealistic avatars that gesture according to the dynamics of a conversation, producing diverse motions of the face, body, and hands. The key to the method is combining the sample diversity of vector quantization with the high-frequency detail obtained from diffusion, which yields more dynamic and expressive motion. Rendering the motion with photorealistic avatars makes subtle gestural nuances visible (e.g., sneers and smirks). To support this research direction, the authors introduce a novel multi-view conversational dataset that enables photorealistic reconstruction. Experiments show that the model generates appropriate and diverse gestures, outperforming diffusion-only and vector-quantization-only methods. A perceptual evaluation further highlights the importance of photorealism (compared with meshes) for accurately assessing subtle motion details in conversational gestures. Code and dataset are available online.

AI image generation

48.9K

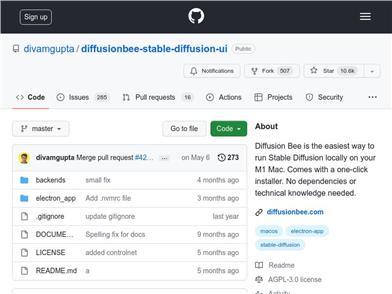

Diffusion Bee

Diffusion Bee aims to be the easiest way to run Stable Diffusion locally on an Intel or M1 Mac. It offers a one-click installer and requires no dependencies or technical knowledge.

Diffusion Bee runs locally on your computer and does not send any data to the cloud (unless you choose to upload images).

Key Features:

- Image-to-image conversion

- Image repair (inpainting)

- Image generation history

- Image upscaling

- Multiple image sizes

- Optimized for M1/M2 chips

- Supports negative prompts and advanced prompt options

- ControlNet support

Diffusion Bee is a GUI wrapper for Stable Diffusion, so the Stable Diffusion license terms apply to its outputs.

For more information, please visit the documentation.

System Requirements:

- Mac with Intel or M1/M2 chip

- For Intel chips: macOS 12.3.1 or later

- For M1/M2 chips: macOS 11.0.0 or later

License: Stable Diffusion is released under the CreativeML OpenRAIL-M license.

AI image generation

100.7K

Featured AI Tools

Flow AI

Flow is an AI-driven filmmaking tool for creators. Built on Google DeepMind's advanced models, it lets users easily create film clips, scenes, and stories. Users can bring their own assets or generate content within Flow. It is available through the Google AI Pro and Google AI Ultra plans, which offer different feature sets for different user needs.

Video Production

43.1K

NoCode

NoCode is a platform that requires no programming experience: users describe their ideas in natural language and the platform quickly generates an application, lowering the barrier to development so more people can realize their ideas. It provides real-time previews and one-click deployment, making it well suited to non-technical users.

Development Platform

44.7K

ListenHub

ListenHub is a lightweight AI podcast generation tool that supports both Chinese and English. It quickly generates podcast content on topics users are interested in. Its main strengths are natural-sounding dialogue and highly realistic voices, so users can enjoy high-quality audio anytime, anywhere. Beyond speeding up content generation, ListenHub works on mobile devices, making it convenient in different settings. The product is positioned as an efficient information-acquisition tool for a wide range of listeners.

AI

42.5K

MiniMax Agent

MiniMax Agent is an intelligent AI companion built on the latest multimodal technology. Its MCP multi-agent collaboration feature lets a team of AI agents work together to solve complex problems efficiently. It offers instant answers, visual analysis, and voice interaction, which the vendor claims can increase productivity tenfold.

Multimodal technology

43.3K

Chinese Picks

Tencent Hunyuan Image 2.0

Tencent Hunyuan Image 2.0 is Tencent's latest AI image generation model, with substantially faster generation and higher image quality. Thanks to an ultra-high-compression-ratio codec and a new diffusion architecture, images can be generated in milliseconds, eliminating the wait typical of traditional generation. The model also improves realism and detail by combining reinforcement learning algorithms with human aesthetic knowledge, making it suitable for professional users such as designers and creators.

Image Generation

42.5K

OpenMemory MCP

OpenMemory is an open-source personal memory layer that provides private, portable memory management for large language models (LLMs). Users keep full control over their data, which stays secure while they build AI applications. The project supports Docker, Python, and Node.js, making it suitable for developers seeking personalized AI experiences. It is particularly suited to users who want to use AI without revealing personal information.

open source

42.8K

FastVLM

FastVLM is an efficient vision encoding model designed specifically for vision-language models (VLMs). Its FastViTHD hybrid vision encoder reduces both the time needed to encode high-resolution images and the number of output tokens, giving strong performance in both speed and accuracy. FastVLM is aimed at developers who need powerful vision-language capabilities across a range of scenarios, and it performs particularly well on mobile devices that require fast responses.

Image Processing

41.7K

Chinese Picks

LiblibAI

LiblibAI is a leading Chinese AI creative platform that offers powerful AI tools to help creators bring their ideas to life. It provides a large library of free AI models that users can search and use for image, text, and audio creation, and users can also train their own models on the platform. Focused on creators' diverse needs, LiblibAI aims to make creation accessible to everyone and to serve the creative industry.

AI Model

6.9M