Story To Motion

Overview:

Story-to-Motion is a novel task that takes a story as input and generates character motion and trajectories consistent with the textual description. The system uses a modern large language model as a text-driven motion scheduler that extracts a series of (text, location) pairs from long texts. It also introduces a text-driven motion retrieval scheme that combines classic motion matching with motion semantics and trajectory constraints. In addition, a progressive masking transformer addresses common problems in transition motions, such as unnatural poses and foot sliding. The system performs well on three subtasks (trajectory following, temporal action combination, and action mixing) and outperforms previous motion synthesis methods.
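To make the scheduler's output concrete, here is a minimal sketch of turning an LLM response into (text, location) pairs. It assumes, hypothetically, that the LLM is prompted to return a JSON list of objects with `text` and `location` fields, where `location` is a 2D ground-plane point. The `MotionCommand` type and `parse_schedule` function are illustrative names, not part of Story-to-Motion itself.

```python
import json
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class MotionCommand:
    """One scheduled step: what to do (text) and where to do it (2D location)."""
    text: str
    location: Tuple[float, float]


def parse_schedule(llm_output: str) -> List[MotionCommand]:
    """Parse a hypothetical JSON schedule emitted by an LLM motion scheduler.

    Assumed format: [{"text": "walk to the door", "location": [x, y]}, ...]
    """
    raw = json.loads(llm_output)
    commands = []
    for i, item in enumerate(raw):
        text = item["text"].strip()
        if not text:
            raise ValueError(f"step {i} has empty text")
        x, y = item["location"]
        commands.append(MotionCommand(text, (float(x), float(y))))
    return commands


# Example response for a short story (illustrative data, not real model output).
example = """[
  {"text": "walk to the kitchen", "location": [3.0, 1.5]},
  {"text": "pick up a cup", "location": [3.0, 1.5]},
  {"text": "run to the garden", "location": [-2.0, 6.0]}
]"""

schedule = parse_schedule(example)
for cmd in schedule:
    print(cmd.text, cmd.location)
```

Consecutive steps that share a location (as with "walk to the kitchen" and "pick up a cup" above) describe an in-place action, while a change of location implies a trajectory the character must follow.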

Target Users:

Applicable to animation, gaming, and film industries, especially in scenarios where characters need to move to different locations and perform specific actions based on textual descriptions.

Use Cases

Game Development: Story-to-Motion can be used in game development to generate character animations from game plot text.

Filmmaking: In film production, it can automatically generate character actions based on the script, improving production efficiency.

Animation Design: Animation designers can use Story-to-Motion to synthesize character animations from text, saving time in the creative process.

Features

Synthesize infinite controllable character animations from long texts

Utilize large language models for text-driven motion scheduling

Develop a text-driven motion retrieval scheme, combining classic motion matching with motion semantics and trajectory constraints

Design a progressive masking transformer to address problems in transition motions
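The retrieval idea (classic motion matching extended with semantics and trajectory) can be illustrated with a simple weighted cost. This is a minimal sketch under stated assumptions, not the project's actual cost function: each candidate clip is assumed to carry a pose feature vector, a flattened future trajectory, and a semantic embedding of its text label, and the weights `w_pose`, `w_traj`, and `w_sem` are hypothetical values. Feature normalization, which real motion matching systems typically apply, is omitted.

```python
import math
from dataclasses import dataclass
from typing import List, Sequence


@dataclass
class Candidate:
    name: str
    pose: Sequence[float]        # pose features (e.g. joint positions/velocities)
    trajectory: Sequence[float]  # future root positions, flattened (x0, y0, x1, y1, ...)
    semantic: Sequence[float]    # embedding of the clip's text label (hypothetical)


def sq_dist(a: Sequence[float], b: Sequence[float]) -> float:
    """Squared Euclidean distance between two equal-length feature vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0


def retrieve(candidates: List[Candidate], pose, trajectory, semantic,
             w_pose: float = 1.0, w_traj: float = 1.0, w_sem: float = 2.0) -> Candidate:
    """Return the candidate with the lowest combined matching cost."""
    def cost(c: Candidate) -> float:
        return (w_pose * sq_dist(c.pose, pose)                  # pose continuity
                + w_traj * sq_dist(c.trajectory, trajectory)    # trajectory constraint
                + w_sem * (1.0 - cosine(c.semantic, semantic))) # text semantics
    return min(candidates, key=cost)


database = [
    Candidate("walk", pose=[0.1, 0.2], trajectory=[0.5, 0.0, 1.0, 0.0], semantic=[1.0, 0.0]),
    Candidate("wave", pose=[0.1, 0.2], trajectory=[0.0, 0.0, 0.0, 0.0], semantic=[0.0, 1.0]),
]

# Query: character should move forward, and the text embedding is close to "walk".
best = retrieve(database, pose=[0.1, 0.2], trajectory=[0.5, 0.0, 1.0, 0.0], semantic=[0.9, 0.1])
print(best.name)
```

Raising `w_sem` makes the retrieved motion follow the text more closely at the expense of trajectory accuracy; raising `w_traj` does the opposite.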

Featured AI Tools

Sora

AI video generation

17.0M

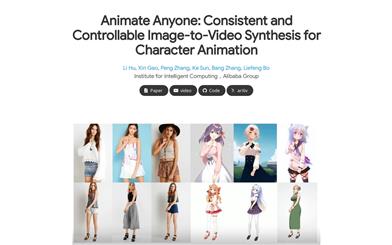

Animate Anyone

Animate Anyone aims to generate character videos from static images driven by signals. Leveraging the power of diffusion models, we propose a novel framework tailored for character animation. To maintain consistency of complex appearance features present in the reference image, we design ReferenceNet to merge detailed features via spatial attention. To ensure controllability and continuity, we introduce an efficient pose guidance module to direct character movements and adopt an effective temporal modeling approach to ensure smooth cross-frame transitions between video frames. By extending the training data, our method can animate any character, achieving superior results in character animation compared to other image-to-video approaches. Moreover, we evaluate our method on benchmarks for fashion video and human dance synthesis, achieving state-of-the-art results.

AI video generation

11.4M