Best 11 AI Model Evaluation Tools of 2025

MLE-bench

MLE-bench is a benchmark released by OpenAI to measure how well AI agents perform machine learning engineering. It curates 75 machine learning engineering competitions from Kaggle, testing real-world skills such as training models, preparing datasets, and running experiments. Human baselines for each competition are established from Kaggle's publicly available leaderboards. Several frontier language models are evaluated on the benchmark using open-source agent frameworks; the best-performing setup, OpenAI's o1-preview paired with the AIDE framework, reached at least Kaggle bronze medal level in 16.9% of the competitions. The study also examines how agent performance changes as resources are scaled and how pre-training contamination affects results. The benchmark code is open source to support further research into AI agents' machine learning engineering capabilities.
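To make the "medal rate" idea concrete, here is a minimal sketch of how an agent's score could be compared against a human leaderboard and aggregated across competitions. Everything in it is an assumption for illustration: the `CompetitionResult` type, the sample scores, and especially the single "top 40%" bronze cutoff, which is a simplification and not the actual Kaggle medal rules or MLE-bench's grading code.

```python
from dataclasses import dataclass


@dataclass
class CompetitionResult:
    """Hypothetical record of one agent run on one competition."""
    name: str
    agent_score: float
    leaderboard: list[float]       # human scores from the public leaderboard
    higher_is_better: bool = True  # some competitions minimize an error metric


# Assumption: one simplified cutoff for "at least bronze".
# Real Kaggle medal thresholds depend on the number of teams.
BRONZE_TOP_FRACTION = 0.40


def beats_fraction(result: CompetitionResult) -> float:
    """Fraction of human leaderboard entries the agent strictly beats."""
    if result.higher_is_better:
        beaten = sum(s < result.agent_score for s in result.leaderboard)
    else:
        beaten = sum(s > result.agent_score for s in result.leaderboard)
    return beaten / len(result.leaderboard)


def earns_medal(result: CompetitionResult) -> bool:
    """True if the agent lands inside the simplified bronze cutoff."""
    return beats_fraction(result) >= 1.0 - BRONZE_TOP_FRACTION


def medal_rate(results: list[CompetitionResult]) -> float:
    """Share of competitions in which the agent earns at least bronze."""
    return sum(earns_medal(r) for r in results) / len(results)


if __name__ == "__main__":
    runs = [
        CompetitionResult("comp-a", 0.85, [0.5, 0.6, 0.7, 0.8, 0.9]),
        CompetitionResult("comp-b", 0.55, [0.5, 0.6, 0.7, 0.8, 0.9]),
        CompetitionResult("comp-c", 1.5, [1.0, 2.0, 3.0, 4.0], higher_is_better=False),
    ]
    print(f"medal rate: {medal_rate(runs):.1%}")
```

A headline figure such as 16.9% is this kind of rate, computed with the real medal rules over the full set of competitions.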

AI Model Evaluation

46.1K

Fresh Picks

SWE-bench Verified

SWE-bench Verified is a human-validated subset of SWE-bench, released by OpenAI, that more reliably assesses the ability of AI models to solve real-world software issues. Each task gives the model a code repository and an issue description and asks it to generate a patch that resolves the issue. The subset was created to make evaluations of a model's ability to perform software engineering tasks autonomously more accurate, and OpenAI uses it in its Preparedness Framework to track the Medium risk level for model autonomy.

AI Model Evaluation

58.8K

Turtle Benchmark

Turtle Benchmark is a benchmark based on the 'Turtle Soup' game that assesses the logical reasoning and context comprehension of large language models (LLMs). It requires no background knowledge, so results are objective, unbiased, and quantifiable. Because its questions are real user-generated guesses, it is designed to resist cheating and be hard for models to 'game'.

AI Model Evaluation

45.8K

English Picks

Scale Leaderboard

Scale Leaderboard is a platform for evaluating AI model performance. It relies on private, expert-built evaluation datasets so that results stay fair and uncontaminated. Rankings are updated regularly as new datasets and models are added. Evaluations are conducted by vetted experts using domain-specific methodologies, which is intended to keep results high-quality and trustworthy.

AI Model Evaluation

53.3K

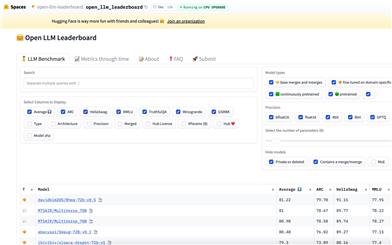

Open LLM Leaderboard

Open LLM Leaderboard, hosted by Hugging Face, is a space designed to showcase and compare the performance of various large language models. It offers a platform for developers, researchers, and enterprises to view the performance of different models on specific tasks, ultimately helping users choose the model best suited to their needs.

AI Model Evaluation

60.7K

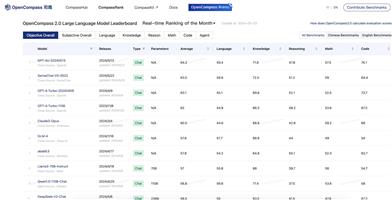

OpenCompass 2.0 Large Language Model Leaderboard

OpenCompass 2.0 is a platform for evaluating large language models. It runs multi-dimensional assessments on multiple closed-source datasets and reports an overall average score plus scores for specific skills. Its continuously updated leaderboard helps developers and researchers compare models in areas such as language, knowledge, reasoning, mathematics, and programming.

AI Model Evaluation

62.7K

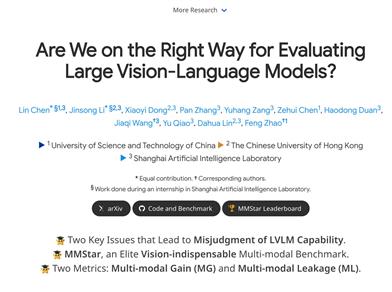

MMStar

MMStar is a benchmark dataset for assessing the multimodal capabilities of large vision-language models. It comprises 1,500 carefully selected vision-language samples covering 6 core abilities and 18 sub-dimensions. Every sample has been reviewed by humans to confirm that it depends on the visual input, carries minimal data leakage, and requires advanced multimodal capabilities to solve. Beyond traditional accuracy, MMStar proposes two new metrics: one measuring data leakage and one measuring the actual performance gain from multimodal training. Researchers can use MMStar to evaluate vision-language models across multiple tasks and use the new metrics to uncover potential issues in those models.
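The two extra metrics can be illustrated with a small sketch. It assumes the definitions as we understand them from the MMStar paper: multi-modal gain is the model's score with images minus its score without images, and multi-modal leakage is how far the image-free score exceeds the score of the underlying text-only LLM, floored at zero. The function names and the sample scores are hypothetical.

```python
def multimodal_gain(score_with_images: float, score_without_images: float) -> float:
    """Improvement that is attributable to actually seeing the image."""
    return score_with_images - score_without_images


def multimodal_leakage(score_without_images: float, base_llm_score: float) -> float:
    """How much the image-free score exceeds the text-only base LLM's score.

    A positive value suggests that benchmark samples may have leaked into
    multimodal training data. Values are floored at zero.
    """
    return max(0.0, score_without_images - base_llm_score)


if __name__ == "__main__":
    # Made-up accuracy percentages for one model on one benchmark.
    with_images, without_images, base_llm = 50.0, 30.0, 25.0
    print("gain:", multimodal_gain(with_images, without_images))
    print("leakage:", multimodal_leakage(without_images, base_llm))
```

A high gain with low leakage is the desirable pattern: the model benefits from the image and does not appear to have memorized the answers.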

AI Model Evaluation

52.7K

Multi Modal Large Language Models

This study assesses the generalization ability, trustworthiness, and causal reasoning of recent proprietary and open-source multimodal large language models (MLLMs) through qualitative analysis across four modalities: text, code, images, and video. Its goal is to make MLLMs more transparent. The authors regard these three attributes as representative factors in the reliability of MLLMs across downstream applications. They evaluate the closed-source GPT-4 and Gemini along with 6 open-source LLMs and MLLMs on 230 manually designed cases, summarizing the qualitative results into 12 scores (4 modalities × 3 attributes). The study reports 14 empirical findings on the capabilities and limitations of proprietary and open-source MLLMs, intended to support more reliable multimodal downstream applications.

AI Model Evaluation

46.9K

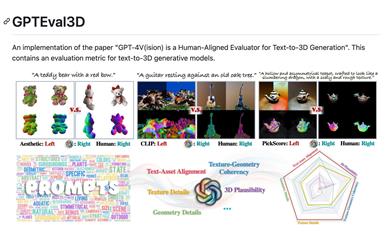

GPTEval3D

GPTEval3D is an open-source tool that uses GPT-4V to automatically evaluate text-to-3D generation models. It computes an Elo score for each model and ranks it against existing models. The tool also supports custom evaluation datasets, so users can apply GPT-4V's evaluation capabilities to their own prompts, making it useful for research on 3D generation.
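For readers unfamiliar with Elo scoring, the sketch below shows the textbook online Elo update applied to pairwise "which output is better" judgments. It is an illustration of the general technique only: GPTEval3D may fit its ratings differently, and the starting rating of 1000, the K-factor of 32, and the model names are assumptions.

```python
def expected_score(rating_a: float, rating_b: float) -> float:
    """Probability that A beats B under the standard Elo model."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


def update_elo(ratings: dict[str, float], winner: str, loser: str, k: float = 32.0) -> None:
    """Shift rating points from the loser to the winner in place."""
    delta = k * (1.0 - expected_score(ratings[winner], ratings[loser]))
    ratings[winner] += delta
    ratings[loser] -= delta


def rank_models(
    comparisons: list[tuple[str, str]],
    initial: float = 1000.0,
    k: float = 32.0,
) -> list[tuple[str, float]]:
    """Process (winner, loser) pairs in order and return models sorted by rating."""
    ratings: dict[str, float] = {}
    for winner, loser in comparisons:
        ratings.setdefault(winner, initial)
        ratings.setdefault(loser, initial)
        update_elo(ratings, winner, loser, k)
    return sorted(ratings.items(), key=lambda item: item[1], reverse=True)


if __name__ == "__main__":
    # Hypothetical judgments: each pair is (preferred model, other model).
    judgments = [("model-a", "model-b"), ("model-a", "model-c"), ("model-b", "model-c")]
    for name, rating in rank_models(judgments):
        print(f"{name}: {rating:.1f}")
```

Because every update moves the same number of points from loser to winner, the total rating is conserved, and only relative differences between models are meaningful.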

AI Model Evaluation

75.3K

Deepmark AI

Deepmark AI is a benchmarking tool for large language models (LLMs) that lets you assess a variety of task-specific metrics on your own data. It comes pre-integrated with generative AI APIs from OpenAI (GPT-4, GPT-3.5 Turbo), Anthropic, Cohere, and AI21.

AI Model Evaluation

49.4K

DeepEval

DeepEval provides a range of metrics for assessing the quality of LLM answers, checking that they are relevant, consistent, unbiased, and non-toxic. The metrics integrate easily into CI/CD pipelines, so machine learning engineers can quickly verify the performance of their LLM applications as they iterate. DeepEval offers a Python-friendly offline evaluation workflow to help confirm that a pipeline is ready for production. It works like 'Pytest for your pipeline': evaluating a pipeline becomes as straightforward as passing all tests.
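The "Pytest for your pipeline" idea can be sketched in plain Python without any evaluation library. The example below is not DeepEval's API: `LLMCase`, `keyword_coverage`, and `assert_case` are hypothetical names, and the keyword-overlap metric is a toy stand-in for the relevance or toxicity metrics a real tool would supply. It only shows the pattern of scoring an answer and failing a test when the score falls below a threshold.

```python
from dataclasses import dataclass


@dataclass
class LLMCase:
    """Hypothetical test case: a prompt, the model's answer, and what we expect to see."""
    prompt: str
    answer: str
    expected_keywords: list[str]


def keyword_coverage(case: LLMCase) -> float:
    """Toy metric: share of expected keywords that appear in the answer."""
    text = case.answer.lower()
    hits = sum(keyword.lower() in text for keyword in case.expected_keywords)
    return hits / len(case.expected_keywords)


def assert_case(case: LLMCase, threshold: float = 0.7) -> None:
    """Fail like an ordinary unit test when the metric is below the threshold."""
    score = keyword_coverage(case)
    assert score >= threshold, f"coverage {score:.2f} is below threshold {threshold}"


def test_refund_answer() -> None:
    # In a real pipeline the answer would come from the LLM application under test.
    case = LLMCase(
        prompt="What is the refund policy?",
        answer="You can request a full refund within 30 days of purchase.",
        expected_keywords=["refund", "30 days"],
    )
    assert_case(case)
```

Saved in a file such as `test_llm.py` (a hypothetical name), a function like `test_refund_answer` is collected by `pytest` like any other test, which is what lets a CI/CD job block a release when answer quality regresses.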

AI Model Evaluation

158.7K
