# Visual Reasoning

Chinese Picks

QVQ Max

QVQ-Max is a visual reasoning model launched by the Qwen team, capable of understanding and analyzing image and video content to provide solutions. It is not limited to text input but can also handle complex visual information. Suitable for users who need multi-modal information processing, such as in education, work, and life scenarios. This product is developed based on deep learning and computer vision technology and is suitable for students, professionals, and creative individuals. This is the initial release, and subsequent optimizations will be continuous.

AI Model

60.2K

Aya Vision 32B

Aya Vision 32B is an advanced vision-language model developed by Cohere For AI, boasting 32 billion parameters and supporting 23 languages, including English, Chinese, and Arabic. This model combines the latest multilingual language model Aya Expanse 32B and the SigLIP2 vision encoder, achieving visual and language understanding integration through a multimodal adapter. It excels in the vision-language field, capable of handling complex image and text tasks such as OCR, image captioning, and visual reasoning. The release of this model aims to promote the popularization of multimodal research, providing a powerful tool for global researchers with its open-source weights. The model is licensed under CC-BY-NC and is subject to Cohere For AI's fair use policy.

AI Model

72.3K

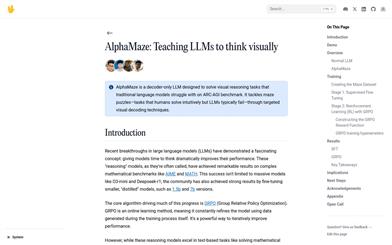

Alphamaze V0.2 1.5B

AlphaMaze is a project focused on enhancing the visual reasoning abilities of Large Language Models (LLMs). It trains models through maze tasks described in text format, enabling them to understand and plan in spatial structures. This method avoids complex image processing and directly assesses the model's spatial understanding through text descriptions. Its main advantage is the ability to reveal how the model thinks about spatial problems, rather than simply whether it can solve them. The model is based on open-source frameworks and aims to promote research and development of language models in the field of visual reasoning.

AI Model

54.6K

Alphamaze

AlphaMaze is a decoder language model designed specifically for solving visual reasoning tasks. It demonstrates the potential of language models in visual reasoning through training on maze-solving tasks. The model is built upon the 1.5 billion parameter Qwen model and is trained with Supervised Fine-Tuning (SFT) and Reinforcement Learning (RL). Its main advantage lies in its ability to transform visual tasks into text format for reasoning, thereby compensating for the lack of spatial understanding in traditional language models. The development background of this model is to improve AI performance in visual tasks, especially in scenarios requiring step-by-step reasoning. Currently, AlphaMaze is a research project, and its commercial pricing and market positioning have not yet been clearly defined.

AI Model

52.2K

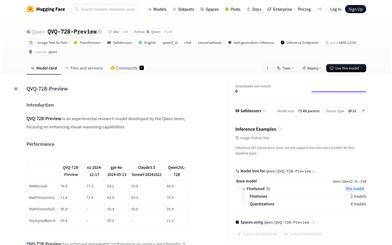

QVQ 72B Preview

QVQ-72B-Preview is an experimental research model developed by the Qwen team, focusing on enhancing visual reasoning capabilities. The model demonstrates strong abilities in multidisciplinary understanding and reasoning, achieving significant advances especially in mathematical reasoning tasks. Although advancements have been made in visual reasoning, it does not completely replace the capabilities of Qwen2-VL-72B, and may gradually lose focus on image content in multi-step visual reasoning, leading to hallucinations. Furthermore, QVQ does not show significantly better performance in basic recognition tasks compared to Qwen2-VL-72B.

AI Model

64.6K

Claude 3.5 Sonnet

Claude 3.5 Sonnet, developed by Anthropic, strikes a remarkable balance between intelligence, speed, and cost. This model sets new industry benchmarks in graduate-level reasoning, undergraduate-level knowledge, and programming proficiency. It excels at understanding nuances, humor, and complex instructions, and can generate high-quality content in a natural and friendly tone. Additionally, it demonstrates strong capabilities in visual reasoning, chart interpretation, and image-to-text transcription, making it an ideal choice for industries like retail, logistics, and financial services.

AI Model

128.1K

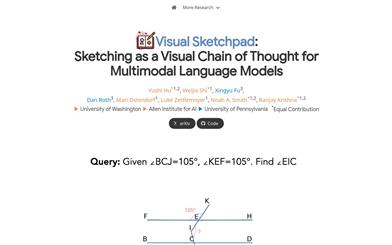

Visual Sketchpad

Visual Sketchpad is a framework that provides a visual sketchpad and drawing tools for multimodal large language models (LLMs). It allows models to operate on visually created elements while planning and reasoning, unlike previous methods that relied solely on text for reasoning steps. Visual Sketchpad enables models to draw using lines, boxes, annotations, and other more human-like drawing elements, thereby facilitating better reasoning. Additionally, it can incorporate expert vision models, such as object detection models for drawing bounding boxes or segmentation models for drawing masks, to further enhance visual perception and reasoning capabilities.

AI Model

58.0K

Fresh Picks

Cantor

Cantor is a multimodal chain-of-thought (CoT) framework that leverages a perception-decision architecture to combine visual context acquisition with logical reasoning, effectively solving complex visual reasoning tasks. Acting as a decision generator, Cantor integrates visual input to analyze images and questions, ensuring tighter alignment with real-world scenarios. Furthermore, Cantor utilizes the advanced cognitive capabilities of large language models (LLMs) as multi-faceted experts to deduce higher-level information, enriching the CoT generation process. Extensive experiments on two challenging visual reasoning datasets demonstrate the effectiveness of the proposed framework. Notably, Cantor achieves significant improvements in multimodal CoT performance without requiring fine-tuning or real-world reasoning, surpassing existing baselines."

AI Model

54.6K

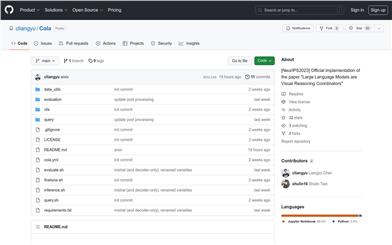

Cola

Cola is a method that uses a language model (LM) to aggregate the outputs of 2 or more vision-language models (VLMs). Our model assembly method is called Cola (COordinative LAnguage model or visual reasoning). Cola performs best when the LM is fine-tuned (called Cola-FT). Cola is also effective in zero-shot or few-shot context learning (called Cola-Zero). In addition to performance improvements, Cola is also more robust to VLM errors. We demonstrate that Cola can be applied to various VLMs (including large multimodal models like InstructBLIP) and 7 datasets (VQA v2, OK-VQA, A-OKVQA, e-SNLI-VE, VSR, CLEVR, GQA), and it consistently improves performance.

AI image detection and recognition

59.6K

Featured AI Tools

Chinese Picks

騰訊混元圖像 2.0

騰訊混元圖像 2.0 是騰訊最新發布的 AI 圖像生成模型,顯著提升了生成速度和畫質。通過超高壓縮倍率的編解碼器和全新擴散架構,使得圖像生成速度可達到毫秒級,避免了傳統生成的等待時間。同時,模型通過強化學習算法與人類美學知識的結合,提升了圖像的真實感和細節表現,適合設計師、創作者等專業用戶使用。

圖片生成

91.6K

English Picks

Lovart

Lovart 是一款革命性的 AI 設計代理,能夠將創意提示轉化為藝術作品,支持從故事板到品牌視覺的多種設計需求。其重要性在於打破傳統設計流程,節省時間並提升創意靈感。Lovart 當前處於測試階段,用戶可加入等候名單,隨時體驗設計的樂趣。

AI設計工具

73.7K

Fastvlm

FastVLM 是一種高效的視覺編碼模型,專為視覺語言模型設計。它通過創新的 FastViTHD 混合視覺編碼器,減少了高分辨率圖像的編碼時間和輸出的 token 數量,使得模型在速度和精度上表現出色。FastVLM 的主要定位是為開發者提供強大的視覺語言處理能力,適用於各種應用場景,尤其在需要快速響應的移動設備上表現優異。

AI模型

56.3K

Keysync

KeySync 是一個針對高分辨率視頻的無洩漏唇同步框架。它解決了傳統唇同步技術中的時間一致性問題,同時通過巧妙的遮罩策略處理表情洩漏和麵部遮擋。KeySync 的優越性體現在其在唇重建和跨同步方面的先進成果,適用於自動配音等實際應用場景。

視頻編輯

54.9K

Manus

Manus 是由 Monica.im 研發的全球首款真正自主的 AI 代理產品,能夠直接交付完整的任務成果,而不僅僅是提供建議或答案。它採用 Multiple Agent 架構,運行在獨立虛擬機中,能夠通過編寫和執行代碼、瀏覽網頁、操作應用等方式直接完成任務。Manus 在 GAIA 基準測試中取得了 SOTA 表現,展現了強大的任務執行能力。其目標是成為用戶在數字世界的‘代理人’,幫助用戶高效完成各種複雜任務。

個人助理

1.5M

Trae國內版

Trae是一款專為中文開發場景設計的AI原生IDE,將AI技術深度集成於開發環境中。它通過智能代碼補全、上下文理解等功能,顯著提升開發效率和代碼質量。Trae的出現填補了國內AI集成開發工具的空白,滿足了中文開發者對高效開發工具的需求。其定位為高端開發工具,旨在為專業開發者提供強大的技術支持,目前尚未明確公開價格,但預計會採用付費模式以匹配其高端定位。

開發與工具

145.7K

English Picks

Pika

Pika是一個視頻製作平臺,用戶可以上傳自己的創意想法,Pika會自動生成相關的視頻。主要功能有:支持多種創意想法轉視頻,視頻效果專業,操作簡單易用。平臺採用免費試用模式,定位面向創意者和視頻愛好者。

視頻生成

18.7M

Chinese Picks

Liblibai

LiblibAI是一箇中國領先的AI創作平臺,提供強大的AI創作能力,幫助創作者實現創意。平臺提供海量免費AI創作模型,用戶可以搜索使用模型進行圖像、文字、音頻等創作。平臺還支持用戶訓練自己的AI模型。平臺定位於廣大創作者用戶,致力於創造條件普惠,服務創意產業,讓每個人都享有創作的樂趣。

AI模型

8.0M