# Self-Supervised Learning

SHMT

SHMT (Self-supervised Hierarchical Makeup Transfer) is a makeup transfer method built on latent diffusion models. It transfers the makeup from one face to another naturally, without the need for explicit labeling. Its main advantages are its ability to handle complex facial features and expression changes and its high-quality transfer results. The work was accepted at NeurIPS 2024.

AI design tools

49.1K

1.58-bit FLUX

1.58-bit FLUX is a text-to-image generation model that quantizes the FLUX.1-dev model to 1.58-bit weights (values in {-1, 0, +1}) while maintaining comparable performance when generating 1024x1024 images. The method does not require access to image data and relies entirely on self-supervision from the FLUX.1-dev model. A custom kernel optimized for 1.58-bit operations achieves a 7.7x reduction in model storage, a 5.1x reduction in inference memory, and improved inference latency. Extensive evaluations on the GenEval and T2I CompBench benchmarks show that 1.58-bit FLUX significantly improves computational efficiency while maintaining generation quality.

Image Generation

80.9K
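As a rough illustration of the ternary weights mentioned in the 1.58-bit FLUX entry: a weight that takes one of three values carries log2(3) ≈ 1.58 bits, which is where the name comes from. The sketch below uses a simple absmean rounding rule to map floats to {-1, 0, +1}. That rule and the function names `ternary_quantize` and `dequantize` are assumptions made for this example; the entry does not describe the actual quantization procedure or the custom kernel.

```python
import math

def ternary_quantize(weights):
    """Map floats to {-1, 0, +1} plus one shared scale.

    Assumption: absmean rounding, chosen only for illustration.
    """
    # Scale by the mean absolute value; fall back to 1.0 for all-zero input.
    scale = sum(abs(w) for w in weights) / len(weights) or 1.0
    # Round to the nearest integer, then clamp into the ternary range.
    codes = [max(-1, min(1, round(w / scale))) for w in weights]
    return codes, scale

def dequantize(codes, scale):
    """Recover approximate float weights from ternary codes."""
    return [c * scale for c in codes]

# Three possible states per weight: log2(3) bits of information.
bits_per_weight = math.log2(3)

weights = [0.9, -0.05, -1.2, 0.3, 0.0, -0.6]
codes, scale = ternary_quantize(weights)
approx = dequantize(codes, scale)
print(codes, round(bits_per_weight, 2))
```

Storing only the ternary codes and a shared scale is what makes large storage savings possible in principle; the specific 7.7x figure quoted above depends on details not given here.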

Video-Foley

Video-Foley is a system for generating sound from video. It uses root mean square (RMS) as a temporal event condition, combined with semantic timbre prompts (audio or text), to achieve high controllability and synchronization in video sound synthesis. Its self-supervised learning framework requires no manual labeling and consists of two stages, Video2RMS and RMS2Sound, which introduce RMS discretization and RMS-ControlNet and build on a pre-trained text-to-audio model. Video-Foley achieves state-of-the-art performance in aligning and controlling sound timing, intensity, timbre, and detail.

AI video generation

59.1K
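To make the RMS condition in the Video-Foley entry concrete, the sketch below computes a frame-wise RMS envelope of an audio signal and bins it into discrete classes. The frame length, hop size, bin count, and the uniform binning are all assumptions chosen for this toy example, and `frame_rms` and `discretize` are hypothetical helper names; the entry does not specify how Video-Foley discretizes RMS.

```python
import math

def frame_rms(samples, frame_len, hop):
    """Root mean square of each frame: sqrt(mean(x^2))."""
    out = []
    for start in range(0, len(samples) - frame_len + 1, hop):
        frame = samples[start:start + frame_len]
        out.append(math.sqrt(sum(x * x for x in frame) / frame_len))
    return out

def discretize(values, num_bins, max_value=1.0):
    """Uniformly bin values in [0, max_value] into integer class ids.

    Assumption: uniform bins, used here only for illustration.
    """
    ids = []
    for v in values:
        clipped = min(max(v, 0.0), max_value)
        ids.append(min(int(clipped / max_value * num_bins), num_bins - 1))
    return ids

# Toy signal: silence, then a loud burst, then a quieter tail.
signal = [0.0] * 8 + [0.8, -0.8] * 4 + [0.2, -0.2] * 4
envelope = frame_rms(signal, frame_len=8, hop=8)
bins = discretize(envelope, num_bins=8)
print(bins)
```

The envelope captures when sound events happen and how intense they are, without saying anything about what they sound like, which is why a separate timbre prompt is needed.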

Fresh Picks

HOI-Swap

HOI-Swap is a diffusion-based video editing framework that tackles the complexities of hand-object interactions. It is trained through self-supervision and works in two stages. The first stage performs seamless object swapping within a single frame and learns to adjust hand interaction patterns, such as grip style, to changes in object attributes. The second stage extends the single-frame edit to the entire video sequence, achieving high-quality video editing through motion alignment and video generation.

Video Editing

56.9K

Fresh Picks

MimicBrush

MimicBrush is an image editing model that enables zero-shot image editing: the user specifies the area to edit in the source image and provides a reference image. The model automatically captures the semantic correspondence between the two images and completes the edit in one step. MimicBrush builds on diffusion priors and learns semantic relationships between different images through self-supervised learning. Experimental results demonstrate its effectiveness and superiority in various test cases.

AI image editing

478.3K

DenseAV

DenseAV is a dual-encoder localization architecture that learns high-resolution, semantically meaningful audio-visual alignment features by observing videos. It can discover the "meaning" of words and the "location" of sounds without explicit localization supervision, and it automatically distinguishes between these two types of associations. DenseAV's localization capability stems from a new multi-head feature aggregation operator, which directly compares dense image and audio representations for contrastive learning. DenseAV also significantly outperforms prior art on semantic segmentation tasks and surpasses ImageBind in cross-modal retrieval with fewer than half the parameters.

Video Editing

56.6K
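The DenseAV entry says its aggregation operator directly compares dense image and audio representations. One way such a comparison could look is sketched below: each attention-style head holds a feature vector per audio frame and per image patch, and the clip-level score is the mean over audio frames of the best match across heads and patches. This particular pooling, and the name `aggregated_similarity`, are assumptions for illustration only; the entry does not give the operator's definition.

```python
def dot(a, b):
    """Inner product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))

def aggregated_similarity(audio, image):
    """Score an (audio clip, image) pair from dense features.

    audio[k][t] is the feature vector of head k at audio frame t.
    image[k][p] is the feature vector of head k at image patch p.
    Assumption: max over heads and patches, then mean over frames.
    """
    num_frames = len(audio[0])
    total = 0.0
    for t in range(num_frames):
        total += max(
            dot(audio[k][t], image[k][p])
            for k in range(len(audio))
            for p in range(len(image[k]))
        )
    return total / num_frames

# Two heads, two audio frames, two image patches, 2-dimensional features.
audio = [[[1, 0], [0, 1]], [[0, 0], [0, 0]]]
image_match = [[[1, 0], [0, 1]], [[0, 0], [0, 0]]]
image_other = [[[-1, 0], [0, -1]], [[0, 0], [0, 0]]]

score_match = aggregated_similarity(audio, image_match)
score_other = aggregated_similarity(audio, image_other)
print(score_match, score_other)
```

In a contrastive setup, scores like these for true audio-image pairs would be pushed above the scores for mismatched pairs; keeping the per-patch similarities around before pooling is what would make localization possible.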

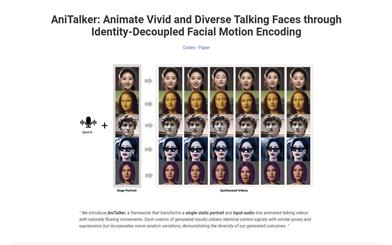

AniTalker

AniTalker is a framework that generates realistic talking-face animations from a single portrait. It improves expressive motion capture through two self-supervised learning strategies and develops an identity encoder through metric learning, which reduces the need for labeled data. AniTalker creates detailed, realistic facial expressions and shows potential for producing dynamic avatars in real-world applications.

AI head portrait generation

122.5K

Miqu 1-70b

Miqu 1-70b is an open-source large language model that uses self-supervised learning and can handle a variety of natural language tasks. With 70 billion parameters, it supports multiple prompt formats and allows fine-tuning to generate high-quality text. Its strong comprehension and generation capabilities make it suitable for chatbots, text summarization, question answering systems, and other applications.

AI Model

122.5K

A Vision Check Up

This paper systematically evaluates the ability of large language models (LLMs) to generate and recognize increasingly complex visual concepts, and demonstrates how a preliminary visual representation learning system can be trained using text models. Because language models cannot directly process pixel-level visual information, the research represents images as code. Although LLM-generated images do not look like natural images, the results on image generation and correction suggest that accurately modeling strings can teach language models a great deal about the visual world. Furthermore, experiments on self-supervised visual representation learning with images generated by text models highlight the potential of training vision models that can make semantic assessments of natural images using only LLMs.

AI Science Research

52.2K

Featured AI Tools

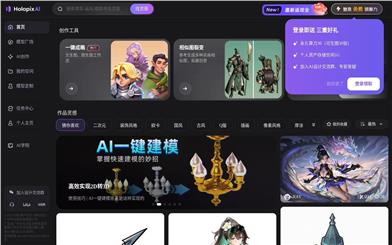

Holopix AI

Holopix AI is an online platform for game art design. It uses AI to generate characters, scenes, and orthographic views with one click and supports rapid modeling, which greatly improves creation efficiency. The product is suitable for game development teams and independent designers, offering a rich selection of style models and multiple creation tools to help users quickly realize their ideas. Registered users get access to several exclusive game-style models. It aims to lower the entry barrier for game art creation and provide a more efficient design experience.

AI Design

37.0K

OpenCut

OpenCut is an open-source online video editor that focuses on simplicity and powerful features, running smoothly on any platform. Its goal is to provide users with an easy-to-use and feature-rich video editing tool, suitable for video creators, content creators, and educators. As a free tool, OpenCut allows users to efficiently complete video editing tasks.

Open Source

37.0K

AI Gist

AI Gist is an AI prompt management tool focused on privacy protection, designed to help users effectively create, organize, and use AI prompts. Its core features include variable replacement, Jinja template support, and AI generation and optimization, allowing users to manage data locally and ensure privacy and security. It also supports multiple platforms and languages, making it suitable for various users.

Prompt Tool

37.0K

NoCode

NoCode is a platform that requires no programming experience. Users describe their ideas in natural language and the platform quickly generates applications, lowering development barriers so that more people can realize their ideas. It provides real-time previews and one-click deployment, making it well suited to non-technical users.

Development Platform

78.4K

ListenHub

ListenHub is a lightweight AI podcast generation tool that supports both Chinese and English. Based on cutting-edge AI technology, it can quickly generate podcast content of interest to users. Its main advantages include natural dialogue and ultra-realistic voice effects, allowing users to enjoy high-quality auditory experiences anytime and anywhere. ListenHub not only improves the speed of content generation but also offers compatibility with mobile devices, making it convenient for users to use in different settings. The product is positioned as an efficient information acquisition tool, suitable for the needs of a wide range of listeners.

AI

63.2K

MiniMax Agent

MiniMax Agent is an AI companion built on multimodal technology. Its MCP-based multi-agent collaboration lets teams of AI agents solve complex problems efficiently. It provides instant answers, visual analysis, and voice interaction, which the product claims can increase productivity tenfold.

Multimodal technology

77.0K

OpenMemory MCP

OpenMemory is an open-source personal memory layer that provides private, portable memory management for large language models (LLMs). It ensures users have full control over their data, maintaining its security when building AI applications. This project supports Docker, Python, and Node.js, making it suitable for developers seeking personalized AI experiences. OpenMemory is particularly suited for users who wish to use AI without revealing personal information.

Open Source

85.8K

Chinese Picks

LiblibAI

LiblibAI is a leading Chinese AI creative platform offering powerful AI creative tools to help creators bring their imagination to life. The platform provides a vast library of free AI creative models, allowing users to search and utilize these models for image, text, and audio creations. Users can also train their own AI models on the platform. Focused on the diverse needs of creators, LiblibAI is committed to creating inclusive conditions and serving the creative industry, ensuring that everyone can enjoy the joy of creation.

AI Model

7.0M