# Inference

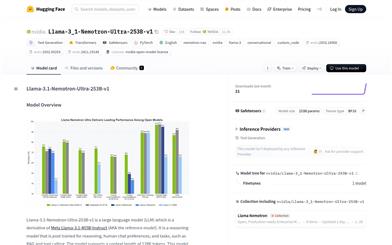

Llama 3.1 Nemotron Ultra 253B

Llama-3.1-Nemotron-Ultra-253B-v1 is a large language model derived from Llama-3.1-405B-Instruct and refined through multi-stage post-training to improve its reasoning and chat capabilities. It supports context lengths of up to 128K tokens and aims for a good balance between accuracy and efficiency. The model is suitable for commercial use and is intended to give developers capable AI assistant functionality.

AI Model

49.1K

Huginn 0125

Huginn-0125 is a latent recurrent-depth model developed by Tom Goldstein's lab at the University of Maryland, College Park. With 3.5 billion parameters and trained on 800 billion tokens, it performs strongly on reasoning and code generation. Its core feature is a recurrent-depth structure that adjusts the amount of computation at test time: the number of computation steps can be varied to fit the task, which optimizes resource use while maintaining performance. The model is openly available on Hugging Face, so users can download it, use it, and build on it freely. Its open availability and flexible architecture make it useful for research and development, particularly in resource-constrained settings or where strong reasoning is required.

Coding Assistant

73.1K
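The test-time compute adjustment described in the Huginn 0125 entry can be illustrated with a toy sketch. It is not the model's actual architecture: `recurrent_block`, `run_recurrent_depth`, and the `weight` value are hypothetical stand-ins that only show how one shared block can be applied a variable number of times, with more steps refining the latent state further.

```python
import math


def recurrent_block(state, embedded_input, weight=0.5):
    """One pass through a single shared block (toy stand-in, hypothetical)."""
    return [math.tanh(weight * s + e) for s, e in zip(state, embedded_input)]


def run_recurrent_depth(embedded_input, num_steps):
    """Apply the same block num_steps times, re-injecting the input each step."""
    state = [0.0] * len(embedded_input)
    for _ in range(num_steps):
        state = recurrent_block(state, embedded_input)
    return state


def max_gap(a, b):
    """Largest element-wise difference between two latent states."""
    return max(abs(x - y) for x, y in zip(a, b))


embedded = [0.2, -0.4, 0.9]
shallow = run_recurrent_depth(embedded, num_steps=2)   # cheap, rough state
deep = run_recurrent_depth(embedded, num_steps=32)     # more compute
deeper = run_recurrent_depth(embedded, num_steps=64)   # even more compute

# Extra steps change the state less and less once it has settled.
print(max_gap(shallow, deep), max_gap(deep, deeper))
```

The step count is chosen by the caller at inference time, so the same weights can serve both a cheap and an expensive setting.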

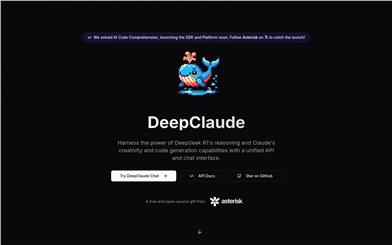

DeepClaude

DeepClaude is an AI tool that combines the reasoning capabilities of DeepSeek R1 with Claude's creativity and code generation. It is offered through a unified API and chat interface, backed by a high-performance streaming API written in Rust for fast responses, and it supports end-to-end encryption and local API key management to protect user data. The product is fully open source, so users are free to contribute to it, modify it, and deploy it. Its main advantages are very low response latency, high configurability, and support for bring-your-own-key (BYOK), which gives developers a high degree of flexibility and control. DeepClaude targets developers and enterprises that need efficient code generation and AI reasoning. It is currently in a free trial phase and may move to usage-based pricing in the future.

Development & Tools

101.6K

Gemini Flash Thinking

Gemini Flash Thinking is the latest AI model launched by Google DeepMind, designed specifically for complex tasks. It can show its reasoning process, helping users understand the logic behind its answers. The model excels in mathematics and science and supports long-text analysis and code execution. It aims to give developers powerful tools for applying AI to complex tasks.

Research Instruments

58.2K

DeepSeek R1 Distill Qwen 14B

DeepSeek-R1-Distill-Qwen-14B is a distilled model developed by the DeepSeek team on top of Qwen2.5-14B, focused on reasoning and text generation tasks. Through large-scale reinforcement learning and data distillation, it substantially improves reasoning ability and generation quality while reducing compute requirements. Its main advantages are high performance, low resource consumption, and broad applicability, which make it suitable for scenarios that need efficient reasoning and text generation.

AI Model

280.4K

English Picks

Tost AI

Tost AI is a free, non-profit, open-source service that runs inference for the latest AI research papers on a non-profit GPU cluster. It does not store inference data; all data expires within 12 hours, and results can optionally be sent to a Discord channel. Each account receives 100 free wallet credits per day, and subscribing as a GitHub sponsor or through Patreon raises this to 1,100 credits per day. The service is funded by corporate and individual sponsors, and all profits go to the first authors of the papers.

Research tools

99.1K

English Picks

vLLM

vLLM is a fast, easy-to-use, and efficient library for large language model (LLM) inference and serving. It delivers high-performance inference through state-of-the-art serving throughput, efficient memory management, continuous batching of incoming requests, fast model execution with CUDA/HIP graphs, quantization, and optimized CUDA kernels. vLLM integrates seamlessly with popular Hugging Face models, supports various decoding algorithms including parallel sampling and beam search, supports tensor parallelism for distributed inference, supports streaming output, and provides an OpenAI-compatible API server. vLLM also supports both NVIDIA and AMD GPUs, along with experimental prefix caching and multi-LoRA support.

Development and Tools

67.9K
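Because the vLLM entry mentions an OpenAI-compatible API server, a client can talk to it with a plain HTTP request. The sketch below only builds the request and does not send it. It assumes a server is reachable at `http://localhost:8000` and uses the OpenAI-style `/v1/completions` route; the model name `my-served-model` and the helper `build_completion_request` are hypothetical placeholders.

```python
import json
import urllib.request


def build_completion_request(prompt, model,
                             base_url="http://localhost:8000",
                             max_tokens=64, stream=False):
    """Build (but do not send) an OpenAI-style completion request."""
    payload = {
        "model": model,            # assumed: name of the model being served
        "prompt": prompt,
        "max_tokens": max_tokens,
        "stream": stream,          # True asks the server for streamed output
    }
    return urllib.request.Request(
        url=f"{base_url}/v1/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


request = build_completion_request(
    "Explain KV caching in one sentence.",
    model="my-served-model",  # hypothetical placeholder
)
print(request.full_url)
# With a server running, the request could be sent with:
# urllib.request.urlopen(request)
```

Keeping the request construction separate from the network call makes it easy to inspect the payload before pointing it at a real deployment.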

Fireworks AI

Fireworks collaborates with leading generative AI researchers to deliver the best models at the fastest speeds. It offers models curated and optimized by Fireworks, along with enterprise-grade throughput and expert technical support, and positions itself as the fastest and most reliable AI platform.

Model Training and Deployment

144.6K

OpenDiT

OpenDiT is an open-source project that provides a high-performance implementation of the Diffusion Transformer (DiT), built on Colossal-AI. It is designed to improve the training and inference efficiency of DiT applications, including text-to-video and text-to-image generation. OpenDiT achieves its performance gains through the following:

* Up to 80% speedup and 50% memory reduction on GPU;

* Core optimizations including FlashAttention, Fused AdaLN, and Fused layernorm;

* Mixed parallelism methods such as ZeRO, Gemini, and DDP, along with sharding of the EMA model to further reduce memory costs;

* FastSeq: A novel sequence parallelism method particularly suitable for workloads like DiT, where activations are large but parameters are small. Single-node sequence parallelism can save up to 48% in communication costs and break through the memory limit of a single GPU, reducing overall training and inference time;

* Significant performance improvements can be achieved with minimal code modifications;

* Users do not need to understand the implementation details of distributed training;

* Complete text-to-image and text-to-video generation workflows;

* Researchers and engineers can easily use and adapt the workflows to real-world applications without modifying the parallelism part;

* Training on ImageNet for text-to-image generation and releasing checkpoints.

AI model training and inference

133.9K

Efficient LLM

This is an efficient LLM inference solution implemented on Intel GPUs. By simplifying the LLM decoder layer, using a segment KV cache strategy, and implementing a custom Scaled-Dot-Product-Attention kernel, it achieves up to 7x lower token latency and up to 27x higher throughput on Intel GPUs than the standard Hugging Face implementation. For details on features, advantages, pricing, and positioning, refer to the official website.

AI model inference and training

49.7K

English Picks

Flash Decoding

Flash-Decoding is a technique for long-context inference that significantly accelerates the attention mechanism during decoding, making generation up to 8x faster. It gains this speed by loading the keys and values in parallel chunks, computing attention on each chunk, and then rescaling and combining the partial results so that the final attention output stays exact. Flash-Decoding is suited to large language models that handle long contexts such as long documents, long conversations, or entire codebases. It is available in the FlashAttention package and in xFormers, which can automatically choose between Flash-Decoding and FlashAttention or use an efficient Triton kernel.

AI Model

98.5K
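The rescale-and-combine step in the Flash-Decoding entry can be sketched in plain Python. This is a minimal illustration for a single query vector, not the library kernel: the chunks are processed one after another here, whereas the real technique runs them in parallel on the GPU, and the usual `1/sqrt(d)` score scaling is omitted for brevity. All function names are hypothetical.

```python
import math


def attention_reference(query, keys, values):
    """Plain softmax attention for one query, used as the ground truth."""
    scores = [sum(q * k for q, k in zip(query, key)) for key in keys]
    top = max(scores)
    weights = [math.exp(s - top) for s in scores]
    total = sum(weights)
    dim = len(values[0])
    return [sum(w * v[d] for w, v in zip(weights, values)) / total
            for d in range(dim)]


def attention_chunk(query, keys, values):
    """Return (unnormalized output, local max, local exp-sum) for one chunk."""
    scores = [sum(q * k for q, k in zip(query, key)) for key in keys]
    local_max = max(scores)
    weights = [math.exp(s - local_max) for s in scores]
    dim = len(values[0])
    out = [sum(w * v[d] for w, v in zip(weights, values)) for d in range(dim)]
    return out, local_max, sum(weights)


def attention_split_kv(query, keys, values, chunk_size):
    """Attend over key/value chunks independently, then rescale and combine."""
    partials = [
        attention_chunk(query, keys[i:i + chunk_size], values[i:i + chunk_size])
        for i in range(0, len(keys), chunk_size)
    ]
    global_max = max(local_max for _, local_max, _ in partials)
    dim = len(values[0])
    numerator = [0.0] * dim
    denominator = 0.0
    for out, local_max, local_sum in partials:
        scale = math.exp(local_max - global_max)  # align chunk to global max
        denominator += scale * local_sum
        for d in range(dim):
            numerator[d] += scale * out[d]
    return [n / denominator for n in numerator]


query = [1.0, 0.5]
keys = [[0.1, 0.2], [0.9, -0.3], [0.4, 0.4], [-0.5, 1.0], [2.0, 0.1]]
values = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5], [2.0, -1.0], [-1.0, 3.0]]

expected = attention_reference(query, keys, values)
result = attention_split_kv(query, keys, values, chunk_size=2)
print(expected, result)
```

Because each chunk carries its own maximum and exponential sum, the chunks never need to see each other until the final, cheap reduction, which is what allows the key/value sequence to be split across parallel workers.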

Stability AI Generative Models

Stability AI Generative Models is an open-source library of generative models that provides functionality for training, inference, and application. It supports training a variety of generative models, including PyTorch Lightning-based training, with rich configuration options and a modular design. Users can train their own generative models with the library or run inference with the models it provides. The library also includes example training configurations and data processing utilities, which help users get started quickly and customize their setup.

AI Model

65.7K

Featured AI Tools

Chinese Picks

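NoCode

NoCode is a platform that requires no programming experience. Users describe their ideas in natural language and quickly generate applications; the goal is to lower the barrier to development so that more people can realize their ideas. The platform offers real-time preview and one-click deployment, which makes it well suited to users without a technical background who want to turn ideas into reality.

Development Platform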

148.5K

Fresh Picks

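ListenHub

ListenHub is a lightweight AI podcast generation tool that supports Chinese and English. Built on cutting-edge AI technology, it quickly generates podcast content on topics the user is interested in. Its main advantages are natural dialogue and highly realistic voices, so users can enjoy a high-quality listening experience anytime, anywhere. ListenHub speeds up content generation and also works on mobile devices, which makes it convenient to use in different settings. The product is positioned as an efficient tool for acquiring information and suits a broad range of listeners.

Audio Generation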

112.1K

English Picks

Lovart

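Lovart is a revolutionary AI design agent that turns creative prompts into artwork, covering design needs that range from storyboards to brand visuals. Its significance lies in breaking away from the traditional design workflow, saving time and sparking creative inspiration. Lovart is currently in beta, and users can join the waitlist to try it.

AI Design Tools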

130.8K

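FastVLM

FastVLM is an efficient vision encoding model designed for vision-language models. Its novel FastViTHD hybrid vision encoder reduces both the encoding time for high-resolution images and the number of output tokens, giving the model strong speed and accuracy. FastVLM is positioned to give developers powerful vision-language processing for a range of applications, and it performs especially well on mobile devices that need fast responses.

AI Model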

99.1K

English Picks

Smart PDFs

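Smart PDFs is an online tool that uses AI to quickly analyze PDF documents and generate concise summaries. It suits users who need to grasp a document's key points quickly, such as students, researchers, and business professionals. The tool uses the Llama 3.3 model, supports multiple languages, and is completely free to use.

Article Summarization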

65.4K

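KeySync

KeySync is a leakage-free lip synchronization framework for high-resolution video. It addresses the temporal consistency problems of traditional lip-sync techniques and handles expression leakage and facial occlusion through a carefully designed masking strategy. KeySync achieves state-of-the-art results in lip reconstruction and cross-synchronization and suits practical applications such as automated dubbing.

Video Editing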

89.1K

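AnyVoice

AnyVoice is a leading AI voice generator that uses advanced deep learning models to convert text into natural speech that is indistinguishable from a human voice. Its main advantages are highly realistic voices, multilingual support, fast generation, and voice customization. The product suits many scenarios, including content creation, education, business, and entertainment production, and aims to give users an efficient and convenient speech generation solution. It currently offers a free trial and is suitable for users at all levels.

Audio Generation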

661.0K

Chinese Picks

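LiblibAI

LiblibAI is a leading Chinese AI creation platform that provides powerful AI tools to help creators realize their ideas. It offers a large collection of free AI creation models, which users can search and use to create images, text, audio, and more. The platform also lets users train their own AI models. It is aimed at the broad community of creators and is committed to making creative tools widely accessible, serving the creative industries, and letting everyone enjoy the pleasure of creating.

AI Model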

8.0M