# Audio-driven

Liteavatar

LiteAvatar is an audio-driven real-time 2D avatar generation model primarily designed for real-time chat scenarios. Through efficient speech recognition and viseme parameter prediction technology combined with a lightweight 2D face generation model, it achieves 30fps real-time inference using only CPU. Key advantages include efficient audio feature extraction, a lightweight model design, and mobile device-friendly support. This technology is suitable for real-time interactive virtual avatar generation scenarios such as online meetings and virtual live streaming. It was developed based on the need for real-time interaction and low hardware requirements. Currently, it is open-source and free, positioned as an efficient, low-resource-consuming real-time avatar generation solution.

Chatbot

72.9K

Syncanimation

SyncAnimation is an innovative audio-driven technology capable of real-time generation of highly realistic speaking avatars and upper body movements. By combining audio with synchronized pose and expression techniques, it addresses the shortcomings of traditional methods in terms of real-time performance and detail representation. This technology primarily targets application scenarios that require high-quality real-time animation generation, such as virtual streaming, online education, remote conferencing, and holds significant practical value. Its pricing and specific market positioning have yet to be determined.

Digital Human

55.2K

FLOAT

FLOAT is an audio-driven avatar video generation technique that utilizes a flow matching generative model, transitioning the generative modeling from pixel-based latent space to learned motion latent space, achieving temporally coherent motion design. This technology incorporates a transformer-based vector field predictor and features a straightforward yet effective per-frame conditioning mechanism. Additionally, FLOAT supports speech-driven emotional enhancement, allowing for the natural integration of expressive motion. Extensive experiments demonstrate that FLOAT outperforms existing audio-driven avatar methods in visual quality, motion fidelity, and efficiency.

Video Production

61.3K

Hallo2

Hallo2 is a facial animation technology based on a latent diffusion generative model, generating high-resolution, long-duration videos driven by audio. It expands upon Hallo's capabilities by incorporating several design improvements, including the generation of long videos, 4K resolution outputs, and enhanced expression control through textual prompts. Key advantages of Hallo2 include high-resolution output, long-duration stability, and enhanced control via textual prompts, making it significantly beneficial for generating diverse and rich portrait animation content.

AI image generation

76.7K

Loopy Model

Loopy is an end-to-end audio-driven video diffusion model that features time modules designed for cross-clip and intra-clip interactions, as well as an audio-to-latent representation module. This enables the model to leverage long-term motion information within the data to learn natural movement patterns and enhance the correlation between audio and portrait motion. This approach eliminates the need for manually specified spatial motion templates required by existing methods, achieving more realistic and high-quality results across various scenarios.

AI video generation

114.0K

Aniportrait

AniPortrait is a project that generates dynamic videos of speaking and singing faces based on audio and image input. It can create realistic facial animations synchronized with audio and static face images. Supports multiple languages and facial redrawing, head pose control. Features include audio-driven animation synthesis, facial reenactment, head pose control, support for self-driven and audio-driven video generation, high-quality animation generation, and flexible model and weight configuration.

AI video generation

704.9K

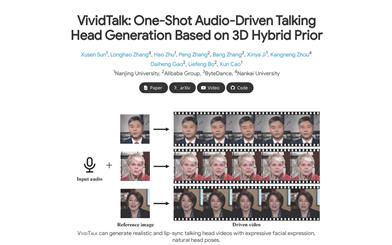

Vividtalk

VividTalk is a one-shot audio-driven avatar generation technique based on 3D mixed prior. It can generate realistic rap videos with rich expressions, natural head poses, and lip synchronization. This technique adopts a two-stage general framework to generate high-quality rap videos with all the above characteristics. Specifically, in the first stage, audio is mapped to a mesh by learning two types of motion (non-rigid facial motion and rigid head motion). For facial motion, a mixed shape and vertex representation is used as an intermediate representation to maximize the model's representational capability. For natural head motion, a novel learnable head posebook is proposed, and a two-stage training mechanism is adopted. In the second stage, a dual-branch motion VAE and a generator are proposed to convert the mesh into dense motion and synthesize high-quality videos frame by frame. Extensive experiments demonstrate that VividTalk can generate high-quality rap videos with lip synchronization and realistic enhancement, outperforming previous state-of-the-art works in both objective and subjective comparisons. The code for this technique will be publicly released after publication.

AI head image generation

138.3K

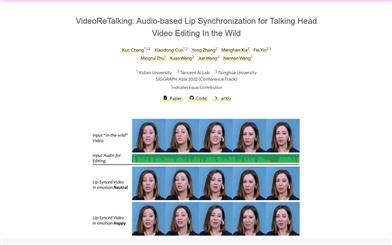

Videoretalking

VideoReTalking is a novel system that can edit real-world talking head videos to produce high-quality lip-sync output videos based on input audio, even with varying emotions. The system breaks down this goal into three consecutive tasks: (1) Generating facial videos with normalized expressions using an expression editing network; (2) Audio-driven lip-sync synchronization; (3) Facial enhancement to improve photorealism. Given a talking head video, we first use an expression editing network to modify the expressions of each frame according to a standardized expression template, resulting in a video with normalized expressions. This video is then input into a lip-sync network along with the given audio to generate a lip-sync video. Finally, we use an identity-aware facial enhancement network and post-processing to enhance the photorealism of the synthesized face. We utilize learning-based methods for all three steps, and all modules can be processed sequentially in a pipeline without any user intervention.

AI video editing

324.3K

Featured AI Tools

Chinese Picks

Nocode

NoCode 是一款无需编程经验的平台,允许用户通过自然语言描述创意并快速生成应用,旨在降低开发门槛,让更多人能实现他们的创意。该平台提供实时预览和一键部署功能,非常适合非技术背景的用户,帮助他们将想法转化为现实。

开发平台

143.8K

Fresh Picks

Listenhub

ListenHub 是一款轻量级的 AI 播客生成工具,支持中文和英语,基于前沿 AI 技术,能够快速生成用户感兴趣的播客内容。其主要优点包括自然对话和超真实人声效果,使得用户能够随时随地享受高品质的听觉体验。ListenHub 不仅提升了内容生成的速度,还兼容移动端,便于用户在不同场合使用。产品定位为高效的信息获取工具,适合广泛的听众需求。

音频生成

109.8K

English Picks

Lovart

Lovart 是一款革命性的 AI 设计代理,能够将创意提示转化为艺术作品,支持从故事板到品牌视觉的多种设计需求。其重要性在于打破传统设计流程,节省时间并提升创意灵感。Lovart 当前处于测试阶段,用户可加入等候名单,随时体验设计的乐趣。

AI设计工具

125.3K

Fastvlm

FastVLM 是一种高效的视觉编码模型,专为视觉语言模型设计。它通过创新的 FastViTHD 混合视觉编码器,减少了高分辨率图像的编码时间和输出的 token 数量,使得模型在速度和精度上表现出色。FastVLM 的主要定位是为开发者提供强大的视觉语言处理能力,适用于各种应用场景,尤其在需要快速响应的移动设备上表现优异。

AI模型

98.5K

English Picks

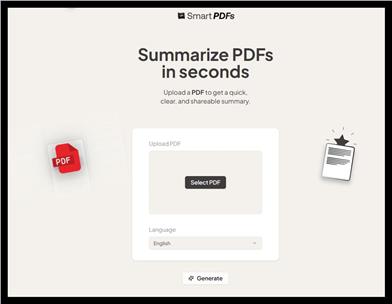

Smart PDFs

Smart PDFs 是一个在线工具,利用 AI 技术快速分析 PDF 文档,并生成简明扼要的总结。它适合需要快速获取文档要点的用户,如学生、研究人员和商务人士。该工具使用 Llama 3.3 模型,支持多种语言,是提高工作效率的理想选择,完全免费使用。

文章摘要

63.5K

Keysync

KeySync 是一个针对高分辨率视频的无泄漏唇同步框架。它解决了传统唇同步技术中的时间一致性问题,同时通过巧妙的遮罩策略处理表情泄漏和面部遮挡。KeySync 的优越性体现在其在唇重建和跨同步方面的先进成果,适用于自动配音等实际应用场景。

视频编辑

88.9K

Anyvoice

AnyVoice是一款领先的AI声音生成器,采用先进的深度学习模型,将文本转换为与人类无法区分的自然语音。其主要优点包括超真实的声音效果、多语言支持、快速生成能力以及语音定制功能。该产品适用于多种场景,如内容创作、教育、商业和娱乐制作等,旨在为用户提供高效、便捷的语音生成解决方案。目前产品提供免费试用,适合不同层次的用户。

音频生成

660.5K

Chinese Picks

Liblibai

LiblibAI是一个中国领先的AI创作平台,提供强大的AI创作能力,帮助创作者实现创意。平台提供海量免费AI创作模型,用户可以搜索使用模型进行图像、文字、音频等创作。平台还支持用户训练自己的AI模型。平台定位于广大创作者用户,致力于创造条件普惠,服务创意产业,让每个人都享有创作的乐趣。

AI模型

8.0M