# Video Analysis

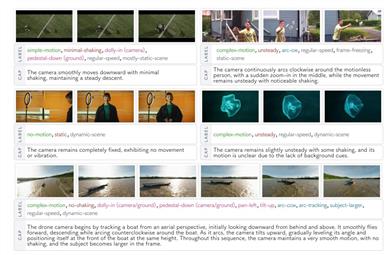

CameraBench

CameraBench is a model for analyzing camera motion in videos, aimed at understanding how cameras move by interpreting video content. Its main advantage is its use of generative vision-language models to classify camera-motion primitives and to perform video-text retrieval. Compared with traditional Structure from Motion (SfM) and Simultaneous Localization and Mapping (SLAM) methods, it is significantly better at capturing scene semantics. The model is open source and aimed at researchers and developers, and improved versions are planned.

Research Tools

40.0K

Fresh Picks

InternVL3

InternVL3 is a multimodal large language model (MLLM) series open-sourced by OpenGVLab, with strong multimodal perception and reasoning capabilities. The series comes in 7 sizes ranging from 1B to 78B parameters and can process text, images, and video together, with excellent overall performance. InternVL3 performs particularly well in industrial image analysis and 3D visual perception, and its overall text performance even surpasses the Qwen2.5 series. Open-sourcing the model gives strong support to multimodal application development and helps bring multimodal technology to more fields.

AI Model

38.4K

SmolVLM2

SmolVLM2 is a lightweight video language model designed to generate related text descriptions or video highlights by analyzing video content. This model is efficient and has low resource consumption, making it suitable for running on various devices, including mobile devices and desktop clients. Its main advantages are the ability to quickly process video data and generate high-quality text output, providing strong technical support for video content creation, video analysis, and education. Developed by the Hugging Face team, it's positioned as an efficient, lightweight video processing tool and is currently in the experimental stage; users can try it for free.

Video Editing

72.6K

InternVL2.5-38B-MPO

InternVL2.5-MPO is an advanced series of multimodal large language models built on InternVL2.5 and Mixed Preference Optimization (MPO). The series performs well on multimodal tasks, processing image, text, and video input and generating high-quality text responses. The model follows a 'ViT-MLP-LLM' paradigm and improves visual processing through pixel unshuffle operations and a dynamic resolution strategy. It also supports multi-image and video input, which broadens its range of applications. In multimodal capability assessments, InternVL2.5-MPO outperforms numerous benchmark models, underscoring its strong position in the multimodal field.

AI Model

60.2K
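
The InternVL2.5-MPO entry mentions pixel unshuffle operations. As a minimal illustrative sketch in plain Python (not InternVL's actual code), pixel unshuffle, also called space-to-depth, folds each r×r block of a feature map into r² channels, so a factor of r = 2 cuts the number of spatial positions (and hence visual tokens) by four. The channel ordering follows the convention of PyTorch's `pixel_unshuffle`; the function name and the nested-list `[channels][height][width]` layout are assumptions made for this example.

```python
from typing import List

# Assumed layout for this sketch: x[channel][row][column]
Tensor3D = List[List[List[float]]]


def pixel_unshuffle(x: Tensor3D, r: int) -> Tensor3D:
    """Space-to-depth: fold each r x r spatial block into r*r channels.

    Output channel c*r*r + i*r + j holds input channel c sampled at
    row offset i and column offset j inside every block.
    """
    c_in, h, w = len(x), len(x[0]), len(x[0][0])
    if h % r or w % r:
        raise ValueError("height and width must be divisible by r")
    h_out, w_out = h // r, w // r
    out: Tensor3D = []
    for c in range(c_in):
        for i in range(r):  # row offset inside each block
            for j in range(r):  # column offset inside each block
                out.append([
                    [x[c][y * r + i][col * r + j] for col in range(w_out)]
                    for y in range(h_out)
                ])
    return out
```

For example, a single-channel 4×4 grid with values 0 to 15 becomes four 2×2 channels: 16 spatial positions shrink to 4, while no values are lost.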

Valley-Eagle-7B

Valley-Eagle-7B is a multimodal large model developed by ByteDance, designed to handle a variety of tasks involving text, image, and video data. The model has achieved top results in internal e-commerce and short video benchmark tests and has demonstrated outstanding performance in OpenCompass tests compared to models of similar scale. Valley-Eagle-7B incorporates a combination of LargeMLP and ConvAdapter to build its projector, and introduces a VisionEncoder to enhance performance in extreme scenarios.

AI Model

58.0K

Valley

Valley is a cutting-edge multimodal large model developed by ByteDance, capable of handling a variety of tasks involving text, image, and video data. The model achieved top results in internal e-commerce and short video benchmarking, outperforming other open-source models. In OpenCompass testing, it scored an average of 67.40 or higher, ranking second among models under 10 billion parameters. The Valley-Eagle version references Eagle and introduces a vision encoder that can flexibly adjust the number of tokens while operating in parallel with the original visual tokens, enhancing the model's performance in extreme scenarios.

AI Model

57.1K

Video Analyzer

The video-analyzer is a video analysis tool that combines the Llama 3.2 11B Vision model with OpenAI's Whisper model. It extracts key frames, passes them to the vision model for detailed description, and combines the per-frame insights with any available audio transcript to describe the events in the video. The tool brings together computer vision, audio transcription, and natural language processing to produce detailed descriptions of video content. Its key advantages are that it runs entirely locally, with no cloud services or API keys; extracts key frames intelligently; transcribes audio with high quality using OpenAI's Whisper; analyzes frames with the Llama 3.2 11B Vision model through Ollama; and generates natural-language descriptions of video content.

Video Editing

108.2K
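
The video-analyzer entry mentions intelligent key frame extraction without saying how it works. One common and simple approach, shown here as a minimal sketch and not necessarily what video-analyzer itself does, is to keep a frame whenever its mean absolute pixel difference from the last kept frame exceeds a threshold. The sketch assumes frames are already decoded into equal-length flat lists of grayscale values; `select_key_frames` and `threshold` are hypothetical names.

```python
from typing import List, Sequence


def select_key_frames(frames: Sequence[Sequence[float]], threshold: float) -> List[int]:
    """Return indices of frames that differ enough from the last kept frame.

    Hypothetical heuristic: a frame becomes a key frame when its mean
    absolute pixel difference from the previous key frame exceeds threshold.
    """
    if not frames:
        return []
    keep = [0]  # always keep the first frame
    for idx in range(1, len(frames)):
        ref = frames[keep[-1]]
        cur = frames[idx]
        if len(cur) != len(ref):
            raise ValueError("all frames must have the same number of pixels")
        # Mean absolute difference against the most recent key frame
        diff = sum(abs(a - b) for a, b in zip(cur, ref)) / len(cur)
        if diff > threshold:
            keep.append(idx)
    return keep
```

Comparing against the last kept frame, rather than the immediately preceding one, means a slow drift still triggers a new key frame once it has accumulated past the threshold.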

InternVL2.5-38B

InternVL 2.5 is a series of multimodal large language models from OpenGVLab that significantly improves on InternVL 2.0 in training strategy, test-time strategy, and data quality. The series can process image, text, and video data and shows strong multimodal understanding and generation, placing it at the forefront of multimodal AI. Its high performance and open-source availability make the InternVL 2.5 series a robust foundation for multimodal tasks.

AI Model

58.8K

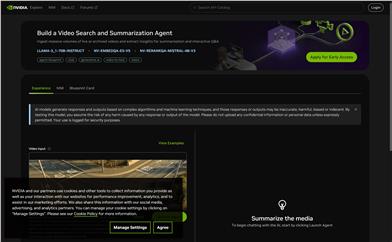

NVIDIA AI Blueprint

NVIDIA AI Blueprint for Video Search and Summarization is a reference workflow based on NVIDIA NIM microservices and generative AI models, designed to build visual AI agents capable of understanding natural language prompts and executing visual question answering. These agents can be deployed in various scenarios, such as factories, warehouses, retail stores, airports, and traffic intersections, assisting operations teams in making better decisions based on rich insights generated from natural interactions.

AI Model

52.7K

NVIDIA Video Search And Summarization

NVIDIA Video Search and Summarization is a model that uses deep learning to process large volumes of real-time or archived video, extracting information for summarization and interactive Q&A. It reflects recent advances in video content analysis: by combining generative AI with video-to-text techniques, it offers a new approach to managing and retrieving video content. Its key advantages are efficient video analysis, accurate summary generation, and interactive Q&A, which are critical for enterprises handling large quantities of video data. The product reflects NVIDIA's commitment to advancing intelligent processing and analysis of video content with its AI models.

AI Search

66.5K

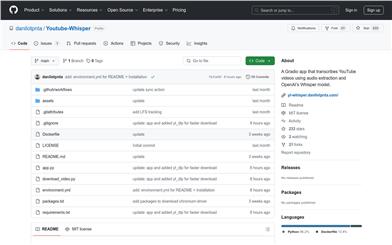

Youtube-Whisper

Youtube-Whisper is a Gradio-based application that extracts audio from YouTube videos and transcribes it into text using OpenAI's Whisper model. This tool is highly beneficial for users needing to convert video content into text for analysis, archiving, or translation. It leverages cutting-edge artificial intelligence technology to enhance the accessibility and usability of video content.

AI speech-to-text

62.7K

Fresh Picks

MyLens.ai

MyLens.ai is an AI tool that helps users understand YouTube videos in depth. It surfaces key information quickly through visual summaries and insights and helps users identify areas for improvement, so they can grasp the essence of each video.

Video Editing

58.8K

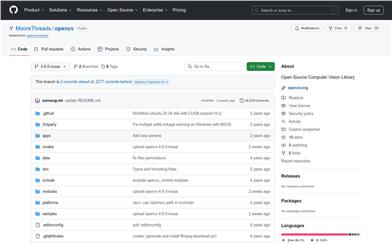

Open Source Computer Vision Library

OpenCV is a cross-platform open-source software library for computer vision and machine learning. It provides a wide range of programming features, including but not limited to image processing, video analysis, feature detection, and machine learning. The library is widely used in academic research and commercial projects, favored by developers for its powerful capabilities and flexibility.

AI image detection and recognition

51.6K

doesVideoContain

doesVideoContain is a model that leverages artificial intelligence to detect video content directly in the browser. It allows users to automatically capture video screenshots and identify significant moments in videos through simple English sentences. This model operates entirely on the client side, preserving user privacy without the need for API fees, and can handle large local files without uploading them to the cloud. It utilizes Transformers.js and ONNX Runtime Web from the Web AI ecosystem, combining custom logic to perform cosine similarity calculations.

AI video editing

85.8K
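
doesVideoContain matches a plain-English query against video frames using cosine similarity. The sketch below illustrates only that matching step, in Python rather than the tool's own JavaScript. It assumes the query and each sampled frame have already been embedded into the same vector space (for example by a CLIP-style model); `rank_frames` and the `(timestamp, embedding)` input format are hypothetical.

```python
import math
from typing import List, Sequence, Tuple


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two vectors; 0.0 if either is all zeros."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def rank_frames(
    query: Sequence[float],
    frames: Sequence[Tuple[float, Sequence[float]]],
    top_k: int = 3,
) -> List[Tuple[float, float]]:
    """Return (timestamp, score) for the top_k frames most similar to the query.

    Hypothetical input: frames is a list of (timestamp_seconds, embedding).
    """
    scored = [(ts, cosine_similarity(query, emb)) for ts, emb in frames]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:top_k]
```

Because cosine similarity ignores vector magnitude, it compares only the direction of the embeddings, which is the usual choice when text and image encoders produce vectors of different scales.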

Qwen2-VL

Qwen2-VL is the latest generation visual language model developed on the Qwen2 framework, featuring multilingual support and powerful visual comprehension capabilities. It can process images of varying resolutions and aspect ratios, understand long videos, and can be integrated into devices such as smartphones and robots for automation. It has achieved leading performances on multiple visual understanding benchmarks, particularly excelling in document comprehension.

AI Model

55.5K

mPLUG-Owl3

mPLUG-Owl3 is a multimodal large language model focused on understanding long image sequences. It can draw on knowledge from retrieval systems, hold interleaved image-text dialogues with users, and watch long videos while retaining their details. The model's source code and weights have been released on Hugging Face, and it is suited to tasks such as visual question answering, multimodal benchmarking, and video benchmarking.

AI Model

52.2K

LLaVA-OneVision

LLaVA-OneVision is a large multimodal model (LMM) developed jointly by ByteDance and several universities. It pushes the performance boundaries of open LMMs in single-image, multi-image, and video scenarios. Its design enables strong transfer learning across modalities and scenarios, yielding new combined capabilities, particularly in video understanding and cross-scenario tasks, as shown by transferring tasks learned on images to video.

AI Model

73.1K

Fresh Picks

LabelU

LabelU is an open-source data labeling tool for efficiently annotating image, video, and audio data, aimed at improving the performance and quality of machine learning models. It supports various annotation types, including label classification, text description, and bounding boxes, to meet diverse labeling needs.

AI image detection and recognition

68.2K

Viral Insight

Viral Insight is an AI application that predicts the viral potential of video content. Users can upload video information and receive prediction results within seconds. This product is part of the Buildspace project, aimed at helping content creators understand the potential virality of their videos before release.

Video Production

76.7K

VideoLLaMA2-7B-Base

VideoLLaMA2-7B-Base, developed by DAMO-NLP-SG, is a large video language model focused on understanding and generating video content. This model demonstrates exceptional performance in visual question answering and video captioning. Through advanced spatiotemporal modeling and audio understanding capabilities, it provides users with a new tool for analyzing video content. Based on the Transformer architecture, it can process multi-modal data, combining textual and visual information to generate accurate and insightful outputs.

AI video generation

76.5K

Chinese Picks

AI Class Representative

AI Class Representative is an intelligent plugin built for learning from videos. It uses AI to provide video content summarization, knowledge Q&A, and subtitle search, helping users quickly grasp a video's core information and learn more efficiently. The product responds to the abundance of online educational resources and to users' demand for efficient learning tools, and it is designed to enhance the learning experience on platforms such as Bilibili.

Education

260.3K

Fresh Picks

MASA

MASA is an advanced model for matching objects across video frames, capable of multi-object tracking (MOT) in complex scenes. Unlike models that rely on domain-specific labeled video datasets, MASA learns instance-level correspondences from the rich object segmentation produced by the Segment Anything Model (SAM). MASA includes a general-purpose adapter that works with foundation segmentation or detection models, enabling zero-shot tracking and strong performance even in complex domains.

AI video editing

64.0K

Video-MME

Video-MME is a benchmark for evaluating the performance of Multi-Modal Large Language Models (MLLMs) in video analysis. It fills the gap in existing evaluation methods regarding the ability of MLLMs to process continuous visual data, providing researchers with a high-quality and comprehensive evaluation platform. The benchmark covers videos of different lengths and evaluates core MLLM capabilities.

AI video analysis

67.3K

SAM

SAM is an advanced video object segmentation model. It combines optical flow and RGB information to detect and segment moving objects in videos. The model has achieved significant performance improvements in both single-object and multi-object benchmark tests while maintaining object identity consistency.

Video Editing

53.8K

Kuasar Video

Kuasar Video provides companies with AI-powered video solutions. Its features include a social media video analyzer, video rating, and video tag search, which help businesses rate their videos on social media and identify the most effective tags from the rating results for targeted content marketing. The product can significantly improve the reach of companies' content.

Video Editing

61.8K

Gaitanalyzer

Gaitanalyzer is a tool for analyzing gait at home to help users understand their health. Users upload a short video of themselves walking from left to right and receive detailed gait data and interpretation. The product implements an automatic gait-analysis algorithm based on a markerless pose estimation model, so videos can be analyzed on a local computer. It provides pose labeling, plots of distance and of peak and minimum values, and display and download of gait data. Gaitanalyzer also uses the Llama2 large language model to explain gait patterns in plain language. Users can access Gaitanalyzer at https://gaitanalyzer.health, where videos are stored on the server, or run it locally with Docker so that videos stay on their own computers.

Health

54.1K

Visionati

Visionati is a visual analysis toolkit that provides comprehensive image and video description, tagging, and content filtering. It integrates leading AI services such as Google Vision, Amazon Rekognition, and OpenAI for accuracy and depth. These features turn complex visual content into clear, actionable insights for digital marketing, storytelling, and data analysis.

Data Analysis

70.7K

Yogger

Yogger is an advanced video analysis application that analyzes movement and gait, tracks progress, and conducts AI-based movement screenings. It can help athletes unlock their potential, prevent injuries, and achieve their personal best. The application provides advanced motion capture capabilities, allowing you to conduct motion analysis anytime, anywhere.

Sports Analysis

57.7K

Video Summarize

video_summarize is a GPT-powered intelligent video content summarization tool. It can automatically convert videos to text and then use GPT to generate video content summaries, helping users quickly understand the key points of a video.

AI video summarization

148.8K

Chinese Picks

BibiGPT

Writing Assistant

998.6K


Featured AI Tools

Flow AI

Flow is an AI-driven movie-making tool designed for creators, utilizing Google DeepMind's advanced models to allow users to easily create excellent movie clips, scenes, and stories. The tool provides a seamless creative experience, supporting user-defined assets or generating content within Flow. In terms of pricing, the Google AI Pro and Google AI Ultra plans offer different functionalities suitable for various user needs.

Video Production

45.0K

NoCode

NoCode is a platform that requires no programming experience, allowing users to quickly generate applications by describing their ideas in natural language, aiming to lower development barriers so more people can realize their ideas. The platform provides real-time previews and one-click deployment features, making it very suitable for non-technical users to turn their ideas into reality.

Development Platform

48.6K

ListenHub

ListenHub is a lightweight AI podcast generation tool that supports both Chinese and English. Based on cutting-edge AI technology, it can quickly generate podcast content of interest to users. Its main advantages include natural dialogue and ultra-realistic voice effects, allowing users to enjoy high-quality auditory experiences anytime and anywhere. ListenHub not only improves the speed of content generation but also offers compatibility with mobile devices, making it convenient for users to use in different settings. The product is positioned as an efficient information acquisition tool, suitable for the needs of a wide range of listeners.

AI

45.5K

MiniMax Agent

MiniMax Agent is an intelligent AI companion built on the latest multimodal technology. Its MCP-based multi-agent collaboration lets teams of AI agents solve complex problems efficiently. It offers instant answers, visual analysis, and voice interaction, which it claims can increase productivity tenfold.

Multimodal technology

48.3K

Chinese Picks

Tencent Hunyuan Image 2.0

Tencent Hunyuan Image 2.0 is Tencent's latest AI image generation model, with significant gains in generation speed and image quality. Thanks to an ultra-high-compression-ratio codec and a new diffusion architecture, images can be generated in milliseconds, eliminating the wait typical of traditional generation. The model also improves the realism and detail of images by combining reinforcement learning algorithms with human aesthetic knowledge, making it suitable for professional users such as designers and creators.

Image Generation

48.0K

OpenMemory MCP

OpenMemory is an open-source personal memory layer that provides private, portable memory management for large language models (LLMs). It ensures users have full control over their data, maintaining its security when building AI applications. This project supports Docker, Python, and Node.js, making it suitable for developers seeking personalized AI experiences. OpenMemory is particularly suited for users who wish to use AI without revealing personal information.

open source

45.8K

FastVLM

FastVLM is an efficient visual encoding model designed specifically for visual language models. It uses the innovative FastViTHD hybrid visual encoder to reduce the time required for encoding high-resolution images and the number of output tokens, resulting in excellent performance in both speed and accuracy. FastVLM is primarily positioned to provide developers with powerful visual language processing capabilities, applicable to various scenarios, particularly performing excellently on mobile devices that require rapid response.

Image Processing

42.5K

Chinese Picks

LiblibAI

LiblibAI is a leading Chinese AI creative platform offering powerful AI creative tools to help creators bring their imagination to life. The platform provides a vast library of free AI creative models, allowing users to search and utilize these models for image, text, and audio creations. Users can also train their own AI models on the platform. Focused on the diverse needs of creators, LiblibAI is committed to creating inclusive conditions and serving the creative industry, ensuring that everyone can enjoy the joy of creation.

AI Model

6.9M