# Transformer Model

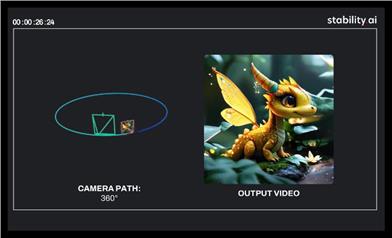

Stable Virtual Camera

Stable Virtual Camera is a 1.3B-parameter general-purpose diffusion model developed by Stability AI. It is a Transformer-based image-to-video model for novel view synthesis (NVS): given input views and target cameras, it generates 3D-consistent novel views of a scene. Its main advantages are that the target camera trajectory can be specified freely, that samples with large viewpoint changes remain temporally smooth, that high consistency is maintained without additional neural radiance field (NeRF) distillation, and that it can generate high-quality, seamlessly looping videos up to half a minute long. The model is freely available for research and non-commercial use only, and is aimed at researchers and non-commercial creators who need image-to-video solutions.

Video Production

58.8K

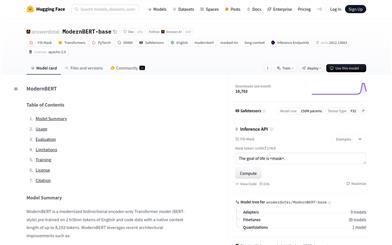

ModernBERT Base

ModernBERT-base is a modernized bidirectional encoder Transformer model pretrained on 2 trillion tokens of English and code data, with native support for contexts of up to 8,192 tokens. It incorporates recent architectural improvements such as Rotary Positional Embeddings (RoPE), Local-Global Alternating Attention, and Unpadding, and performs strongly on long-text tasks. It is well suited to processing long documents for retrieval, classification, and semantic search over large corpora. Because the training data is primarily English and code, performance may be lower on other languages.
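To make the idea of Local-Global Alternating Attention more concrete, here is a minimal sketch of how per-layer attention masks could alternate between full (global) attention and a sliding local window. It is an illustration only, not ModernBERT's actual implementation: the function name `attention_mask` and the default values `window=128` and `global_every=3` are assumptions chosen for the example.

```python
def attention_mask(seq_len, layer_idx, window=128, global_every=3):
    """Build a seq_len x seq_len boolean mask; True means 'query may attend to key'.

    Assumption for this sketch: every `global_every`-th layer (starting at
    layer 0) attends globally, and all other layers attend only to keys
    within `window // 2` positions on either side of the query.
    """
    is_global = layer_idx % global_every == 0
    half = window // 2
    return [
        [is_global or abs(q - k) <= half for k in range(seq_len)]
        for q in range(seq_len)
    ]


def attended_pairs(mask):
    """Count the (query, key) pairs the mask allows."""
    return sum(sum(1 for allowed in row if allowed) for row in mask)
```

For long sequences, local layers allow a number of query-key pairs that grows linearly with sequence length rather than quadratically, which is the motivation for alternating the two kinds of layer.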

AI Model

52.2K

LLNL/LUAR

LLNL/LUAR is a Transformer-based model for learning author representations, aimed primarily at research on cross-domain transfer for author verification. Introduced in an EMNLP 2021 paper, it examines whether author representations learned in one domain transfer to another. Its key advantages are that it scales to large datasets and supports zero-shot transfer across diverse domains such as Amazon reviews, fanfiction short stories, and Reddit comments. The work contributes to research on cross-domain author verification and has potential applications in natural language processing. The model is open source under the Apache-2.0 license, which permits free use.

Research Equipment

46.9K

IPAdapter-Instruct

IPAdapter-Instruct is an image generation model developed by Unity Technologies. It extends a transformer model with an additional text-embedding condition, so that a single model can efficiently perform multiple image generation tasks. Its primary advantage is that 'Instruct' prompts switch flexibly between different interpretations of the condition, such as style transfer and object extraction, with minimal quality loss compared to task-specific models.

AI image generation

64.0K

GenAU

GenAU is an audio generation model developed by Snap Research. It combines the AutoCap automatic captioning model with the GenAU audio generation architecture to significantly improve audio quality. It excels at generating environmental sounds and sound effects, particularly where training data is limited and caption quality is poor, and shows strong potential for audio synthesis.

AI audio enhancer

51.9K

VideoLLaMA2-7B-Base

VideoLLaMA2-7B-Base, developed by DAMO-NLP-SG, is a large video-language model focused on understanding video content and generating text about it. It performs strongly on visual question answering and video captioning. With advanced spatiotemporal modeling and audio understanding, it gives users a new tool for analyzing video content. Built on the Transformer architecture, it processes multimodal data, combining textual and visual information to produce accurate and insightful outputs.

AI video generation

77.0K

GRM

GRM is a large-scale reconstruction model that recovers 3D assets from sparse-view images in 0.1 seconds and achieves generation in 8 seconds. It is a feed-forward Transformer-based model that efficiently fuses multi-view information to translate input pixels into pixel-aligned Gaussians, which are then back-projected into a dense set of 3D Gaussians representing the scene. Together, the Transformer architecture and the 3D Gaussian representation yield a scalable and efficient reconstruction framework. According to the authors, extensive experiments show that the method surpasses alternatives in both reconstruction quality and efficiency. Combined with existing multi-view diffusion models, GRM can also be applied to generation tasks such as text-to-3D and image-to-3D.
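The back-projection step can be illustrated with a small sketch. It assumes a simple pinhole camera and per-pixel predictions of depth, scale, and opacity, and works in camera coordinates only. The names `Gaussian3D`, `unproject_pixel`, and `pixels_to_gaussians` are hypothetical and do not come from GRM's code, and a real 3D Gaussian carries more attributes (rotation, color) than shown here.

```python
from dataclasses import dataclass


@dataclass
class Gaussian3D:
    center: tuple   # (x, y, z) in camera coordinates
    scale: float    # isotropic scale, a simplification for this sketch
    opacity: float


def unproject_pixel(u, v, depth, fx, fy, cx, cy):
    """Lift pixel (u, v) with depth along the z-axis to a 3D point (pinhole model)."""
    x = (u - cx) / fx * depth
    y = (v - cy) / fy * depth
    return (x, y, depth)


def pixels_to_gaussians(depth_map, scale_map, opacity_map, fx, fy, cx, cy):
    """Turn per-pixel predictions into one 3D Gaussian per pixel."""
    gaussians = []
    for v, row in enumerate(depth_map):
        for u, depth in enumerate(row):
            center = unproject_pixel(u, v, depth, fx, fy, cx, cy)
            gaussians.append(Gaussian3D(center, scale_map[v][u], opacity_map[v][u]))
    return gaussians
```

Because every input pixel yields exactly one Gaussian, the number of Gaussians scales directly with the number and resolution of the input views, which is what makes the resulting set dense.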

AI image generation

60.7K

Featured AI Tools

Flow AI

Flow is an AI-driven filmmaking tool designed for creators. It uses Google DeepMind's advanced models to let users easily create cinematic clips, scenes, and stories. The tool offers a seamless creative experience, whether users bring their own assets or generate content within Flow. It is offered through the Google AI Pro and Google AI Ultra plans, which provide different feature sets for different user needs.

Video Production

48.9K

NoCode

NoCode is a platform that requires no programming experience: users describe an idea in natural language, and the platform quickly generates an application from it. It aims to lower the barrier to development so that more people can realize their ideas. With real-time previews and one-click deployment, it is well suited to non-technical users.

Development Platform

53.0K

ListenHub

ListenHub is a lightweight AI podcast generation tool that supports both Chinese and English. It quickly generates podcast content on topics that interest the user. Its main advantages are natural dialogue and highly realistic voices, giving users a high-quality listening experience anytime, anywhere. Beyond speeding up content generation, ListenHub also works on mobile devices, making it convenient to use in different settings. The product is positioned as an efficient way to take in information and targets a wide range of listeners.

AI

48.0K

MiniMax Agent

MiniMax Agent is an AI companion built on the latest multimodal technology. Its MCP-based multi-agent collaboration lets teams of AI agents solve complex problems efficiently. It offers instant answers, visual analysis, and voice interaction, and is claimed to increase productivity tenfold.

Multimodal technology

53.8K

Chinese Picks

Tencent Hunyuan Image 2.0

Tencent Hunyuan Image 2.0 is Tencent's latest AI image generation model, with significantly improved generation speed and image quality. Using a codec with an ultra-high compression ratio and a new diffusion architecture, it generates images at millisecond-level speed, eliminating the wait typical of traditional generation. The model also improves image realism and detail by combining reinforcement learning with human aesthetic knowledge, making it suitable for professional users such as designers and creators.

Image Generation

51.9K

OpenMemory MCP

OpenMemory is an open-source personal memory layer that provides private, portable memory management for large language models (LLMs). It gives users full control over their data and keeps that data secure when building AI applications. The project supports Docker, Python, and Node.js, making it suitable for developers who want to build personalized AI experiences. It is particularly suited to users who want to use AI without revealing personal information.

open source

48.9K

FastVLM

FastVLM is an efficient vision encoding model designed for vision language models. It uses the FastViTHD hybrid vision encoder to reduce both the encoding time for high-resolution images and the number of output tokens, delivering strong performance in both speed and accuracy. FastVLM aims to give developers powerful vision-language processing capabilities across a range of scenarios, and performs especially well on mobile devices that require fast response.

Image Processing

43.9K

Chinese Picks

LiblibAI

LiblibAI is a leading Chinese AI creative platform that offers powerful tools to help creators bring their imagination to life. It provides a large library of free AI models that users can search and use to create images, text, and audio, and users can also train their own models on the platform. Focused on the diverse needs of creators, LiblibAI aims to make creation accessible to everyone and to serve the creative industry.

AI Model

6.9M