# AI Inference

DeepSeek-V3/R1 Inference System

The DeepSeek-V3/R1 inference system is a high-performance inference architecture developed by the DeepSeek team to optimize inference efficiency for large-scale sparse (mixture-of-experts) models. It uses cross-node expert parallelism (EP) to raise GPU matrix-computation efficiency by enlarging the batch each expert processes, and to reduce latency because each GPU holds only a small subset of experts. The system also uses a dual-batch overlap strategy, which hides communication cost by overlapping it with computation, and a multi-level load-balancing mechanism to keep large-scale distributed deployments running efficiently. Its main advantages are high throughput, low latency, and efficient resource utilization, making it suitable for high-performance computing and AI inference workloads.
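The load balancing mentioned above is about keeping every GPU equally busy when experts receive uneven traffic. As a minimal sketch, assuming per-expert load estimates are already known, a greedy heaviest-first assignment shows the basic idea. This is a generic heuristic, not DeepSeek's actual balancer; `balance_experts` and the load numbers are hypothetical.

```python
import heapq


def balance_experts(expert_loads, num_gpus):
    """Greedily assign experts to GPUs, heaviest expert first.

    expert_loads: mapping of expert id -> estimated load (hypothetical units).
    Returns one list of expert ids per GPU.
    """
    # Min-heap of (current total load, gpu index): the least-loaded GPU pops first.
    heap = [(0, gpu) for gpu in range(num_gpus)]
    heapq.heapify(heap)
    assignment = [[] for _ in range(num_gpus)]
    # Longest-processing-time heuristic: place the heaviest experts first.
    for expert, load in sorted(expert_loads.items(), key=lambda kv: kv[1], reverse=True):
        total, gpu = heapq.heappop(heap)
        assignment[gpu].append(expert)
        heapq.heappush(heap, (total + load, gpu))
    return assignment


loads = {"e0": 90, "e1": 60, "e2": 50, "e3": 40, "e4": 30, "e5": 10}
plan = balance_experts(loads, num_gpus=2)
print(plan)  # [['e0', 'e3', 'e5'], ['e1', 'e2', 'e4']]  (140 load units per GPU)
```

A real system must also handle loads that shift over time and the cost of moving expert weights, which this static sketch ignores.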

Model Training and Deployment

55.2K

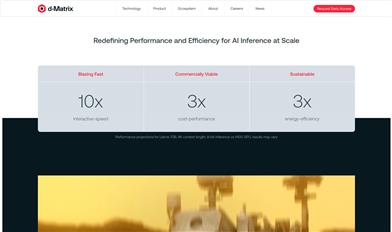

d-Matrix

d-Matrix is a company focused on AI inference technology. Its flagship product, Corsair, is an AI inference platform designed for data centers that aims to deliver very high inference speed at low latency. Through hardware-software co-design, d-Matrix optimizes generative AI inference performance, with the goal of making large-scale AI inference in data centers more efficient and sustainable.

Data Centers

Cerebras Inference

Cerebras Inference is an AI inference platform from Cerebras that the company says runs up to 20 times faster than GPU-based solutions at one-fifth the cost. Built on Cerebras' high-performance computing technology, it provides fast, efficient inference for large language models and other high-performance computing workloads. The platform supports a range of AI models for industries such as healthcare, energy, government, and financial services, and Cerebras also lets users train their own foundation models or fine-tune existing open-source models.

Model Training and Deployment

Rakis

Rakis is a decentralized inference network that runs entirely in the browser. Using blockchain technology, it lets nodes request and share AI model inference results, so AI models can be executed in a distributed way without relying on central servers. Because browsers act as nodes on any WebGPU-compatible platform, ordinary users can take part in running inference. The project is open source and emphasizes transparency and verifiability, aiming to address the challenges of determinism, scalability, and security in decentralized AI inference.
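A decentralized network has to decide which result to trust when untrusted nodes run the same inference. The sketch below assumes a simple exact-match quorum vote; it is a hypothetical illustration of the verifiability problem, not Rakis's actual verification protocol, and `reach_consensus` is an invented name.

```python
from collections import Counter


def reach_consensus(results, quorum):
    """Return the output reported by at least `quorum` nodes, or None.

    results: mapping of node id -> inference output string.
    """
    if not results:
        return None
    # Most frequently reported output and how many nodes reported it.
    output, votes = Counter(results.values()).most_common(1)[0]
    return output if votes >= quorum else None


results = {"node-a": "Paris", "node-b": "Paris", "node-c": "Lyon"}
print(reach_consensus(results, quorum=2))  # Paris
print(reach_consensus(results, quorum=3))  # None
```

Exact matching only works if every node produces identical output for the same request, which is why determinism is one of the challenges listed for decentralized inference.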

Development Platform

RunPod

RunPod is scalable cloud GPU infrastructure for training and inference. Cloud GPUs can be rented from $0.20 per hour, with support for TensorFlow, PyTorch, and other AI frameworks. RunPod offers reliable cloud services, free bandwidth, multiple GPU options, serverless endpoints, and AI endpoints to suit various needs.
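Hourly GPU pricing makes cost estimation simple arithmetic: hours × GPUs × hourly rate. The sketch below defaults to the $0.20/hour entry price quoted above; actual rates depend on the GPU model, so treat the default as an assumption. `estimate_gpu_cost` is a hypothetical helper, not part of any RunPod SDK.

```python
def estimate_gpu_cost(hours, num_gpus, hourly_rate=0.20):
    """Estimate rental cost in USD for `num_gpus` GPUs over `hours` hours.

    hourly_rate defaults to the $0.20/hour entry price (an assumption:
    real rates vary by GPU type).
    """
    if hours < 0 or num_gpus < 0:
        raise ValueError("hours and num_gpus must be non-negative")
    return round(hours * num_gpus * hourly_rate, 2)


# e.g. a 24-hour fine-tuning run on 4 GPUs at the entry rate
print(estimate_gpu_cost(24, 4))  # 19.2
```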

Development & Tools

Featured AI Tools

Flow AI

Flow is an AI-driven filmmaking tool for creators that uses Google DeepMind's advanced models to help users create high-quality cinematic clips, scenes, and stories. It offers a seamless creative workflow in which users can bring their own assets or generate them within Flow. Flow is available through the Google AI Pro and Google AI Ultra plans, which offer different features for different user needs.

Video Production

NoCode

NoCode is a platform that requires no programming experience: users describe their ideas in natural language and the platform quickly generates applications from them, lowering the barrier to development so more people can realize their ideas. It provides real-time previews and one-click deployment, which makes it well suited to non-technical users.

Development Platform

ListenHub

ListenHub is a lightweight AI podcast generation tool that supports both Chinese and English. Built on current AI technology, it quickly generates podcast content on topics users are interested in. Its main strengths are natural-sounding dialogue and highly realistic voices, so users can enjoy high-quality audio anytime, anywhere. Besides generating content quickly, ListenHub works on mobile devices, making it convenient to use in different settings. The product is positioned as an efficient way to take in information, suited to a wide range of listeners.

AI

MiniMax Agent

MiniMax Agent is an intelligent AI companion built on the latest multimodal technology. Its MCP-based multi-agent collaboration lets teams of AI agents work together to solve complex problems efficiently. It offers instant answers, visual analysis, and voice interaction, and MiniMax claims it can increase productivity tenfold.

Multimodal Technology

Tencent Hunyuan Image 2.0

Tencent Hunyuan Image 2.0 is Tencent's latest AI image generation model, with significant improvements in generation speed and image quality. Thanks to a codec with an ultra-high compression ratio and a new diffusion architecture, images can be generated in milliseconds, avoiding the wait associated with traditional generation. The model also improves the realism and detail of images by combining reinforcement learning with human aesthetic knowledge, making it suitable for professional users such as designers and creators.

Image Generation

OpenMemory MCP

OpenMemory is an open-source personal memory layer that provides private, portable memory management for large language models (LLMs). Users keep full control over their data, which stays secure while they build AI applications. The project supports Docker, Python, and Node.js, making it suitable for developers seeking personalized AI experiences, and particularly for users who want to use AI without exposing personal information.
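To make the idea of a private, user-controlled memory layer concrete, here is a minimal sketch that keeps everything in local process memory and lets a user erase their data. `LocalMemoryStore` and its methods are hypothetical and are not OpenMemory's API. Production memory layers usually rank memories by embedding similarity; naive keyword overlap stands in for that here.

```python
from collections import defaultdict


class LocalMemoryStore:
    """Hypothetical in-process memory layer: nothing leaves the local machine."""

    def __init__(self):
        # user id -> list of remembered facts
        self._memories = defaultdict(list)

    def add(self, user_id, text):
        self._memories[user_id].append(text)

    def search(self, user_id, query, limit=3):
        """Return memories sharing the most words with `query` (keyword overlap)."""
        query_words = set(query.lower().split())
        scored = []
        for text in self._memories.get(user_id, []):
            overlap = len(query_words & set(text.lower().split()))
            if overlap:
                scored.append((overlap, text))
        # Stable sort: ties keep insertion order.
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return [text for _, text in scored[:limit]]

    def delete_user(self, user_id):
        """Erase everything stored for a user."""
        self._memories.pop(user_id, None)


store = LocalMemoryStore()
store.add("alice", "prefers Python for scripting")
store.add("alice", "is allergic to peanuts")
print(store.search("alice", "which language for scripting"))  # ['prefers Python for scripting']
store.delete_user("alice")
print(store.search("alice", "scripting"))  # []
```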

Open Source

FastVLM

FastVLM is an efficient visual encoding model designed for vision language models. Its FastViTHD hybrid visual encoder reduces both the time needed to encode high-resolution images and the number of visual tokens it outputs, giving strong performance in both speed and accuracy. FastVLM is aimed at developers who need capable vision language processing in a variety of scenarios, and it performs particularly well on mobile devices where fast response is required.
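Fewer visual tokens matter because the language model must process every token the encoder emits. For a square image, an encoder whose output grid is the image size divided by its total downsampling stride produces (size / stride)² tokens. The strides below are illustrative assumptions, not FastViTHD's published figures, and `visual_token_count` is a hypothetical helper.

```python
def visual_token_count(image_size, total_stride):
    """Visual tokens for a square image whose encoder output grid
    is image_size / total_stride on each side."""
    if image_size % total_stride:
        raise ValueError("image_size must be divisible by total_stride")
    side = image_size // total_stride
    return side * side


# Illustrative strides only (assumptions):
plain_vit = visual_token_count(1024, 16)     # 64 x 64 grid
hierarchical = visual_token_count(1024, 64)  # 16 x 16 grid
print(plain_vit, hierarchical, plain_vit // hierarchical)  # 4096 256 16
```

Under these assumed strides, quadrupling the downsampling cuts the token count by 16×, since the count scales with the square of the grid side.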

Image Processing

LiblibAI

LiblibAI is a leading Chinese AI creative platform offering powerful tools that help creators bring their ideas to life. It provides a large library of free AI creative models that users can search and use for image, text, and audio creation, and users can also train their own AI models on the platform. Focused on the diverse needs of creators, LiblibAI aims to make creation accessible to everyone and to serve the creative industry.

AI Model
