Qwen2.5-1M

Overview

Qwen2.5-1M is an open-source AI language model designed for long-sequence tasks, with support for context lengths of up to 1 million tokens. Its training methods and inference optimizations improve both the quality and the efficiency of long-sequence processing. The model excels at long-context tasks while keeping strong performance on short text, which makes it a solid open-source alternative to existing long-context models. It suits applications that involve large volumes of text, such as document analysis and information retrieval.

Target Users

This model is aimed at developers, researchers, and enterprises that handle long text data, particularly in natural language processing, text analysis, and information retrieval. It lets them process large-scale text data in a single context rather than splitting it into smaller pieces.

Use Cases

In long-context tasks such as needle-in-a-haystack retrieval, the model can accurately retrieve hidden information from documents of 1 million tokens.

The model performs strongly on complex long-context understanding benchmarks such as RULER, LV-Eval, and LongBench-Chat.

It consistently outperforms GPT-4o-mini across multiple datasets while handling eight times the context length.
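The "eight times" figure can be checked with simple arithmetic. The sketch below assumes a 128,000-token context window for GPT-4o-mini; that number does not appear in this page and is taken from public documentation. The 1,000,000-token value is the rounded figure quoted in the overview.

```python
# Rounded context length quoted in the overview ("up to 1 million tokens").
QWEN_1M_CONTEXT_TOKENS = 1_000_000

# Assumption (not stated on this page): GPT-4o-mini context window of 128K tokens.
GPT_4O_MINI_CONTEXT_TOKENS = 128_000

ratio = QWEN_1M_CONTEXT_TOKENS / GPT_4O_MINI_CONTEXT_TOKENS
print(f"Context length ratio: {ratio:.2f}x")  # prints 7.81x, i.e. roughly eight times
```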

Features

Supports a maximum context length of 1 million tokens, ideal for long sequence processing tasks.

Open-source model available in 7B and 14B versions for easy developer access.

Inference framework based on vLLM that integrates sparse attention methods, giving a 3x to 7x speedup in inference.

Technical reports share insights on training and inference framework designs and results from ablation studies.

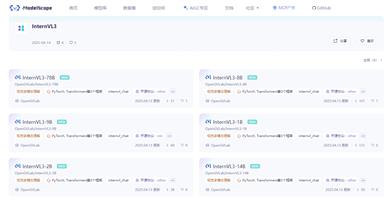

Online demos available on Hugging Face and Modelscope to experience the model's performance.

How to Use

1. Meet the system requirements: a GPU from the Ampere or Hopper architecture (required for the optimized kernels), CUDA 12.1 or 12.3, and Python >= 3.9 and <= 3.12.

2. Clone the vLLM repository and install it manually. If the required changes are not yet in the main repository, clone from the custom branch instead.

3. Launch an OpenAI-compatible API service, configuring parameters based on your hardware setup, such as the number of GPUs and maximum input sequence length.

4. Interact with the model: use curl or Python code to send requests to the API and read the model's response.
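As a rough illustration of steps 1 and 4, the sketch below checks the Python version range and builds a chat request for an OpenAI-compatible endpoint using only the standard library. The server address (`http://localhost:8000/v1`), the model name, and the helper names `python_version_supported`, `build_chat_request`, and `ask` are assumptions made for this example; adjust them to match however the service was launched in step 3.

```python
import json
import sys
import urllib.request

# Assumptions for illustration only: a locally running server and this model name.
BASE_URL = "http://localhost:8000/v1"
MODEL_NAME = "Qwen/Qwen2.5-7B-Instruct-1M"


def python_version_supported(version=None):
    """Return True if the interpreter falls in the 3.9 to 3.12 range from step 1."""
    major_minor = tuple((version or sys.version_info)[:2])
    return (3, 9) <= major_minor <= (3, 12)


def build_chat_request(prompt, max_tokens=256):
    """Build (but do not send) a POST request in the OpenAI chat completions format."""
    payload = {
        "model": MODEL_NAME,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }
    return urllib.request.Request(
        BASE_URL + "/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(prompt):
    """Send the prompt to the running service and return the reply text."""
    with urllib.request.urlopen(build_chat_request(prompt)) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]


# Example call, valid only while the API service from step 3 is running:
# print(ask("Summarize the key points of the document above."))
```

Separating request construction from sending makes it possible to inspect the payload without a live server, which helps when tuning parameters such as `max_tokens` for very long inputs.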
