DeepSeek-VL2-Tiny

Overview

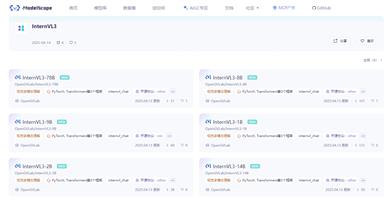

DeepSeek-VL2 is a series of large Mixture-of-Experts (MoE) vision-language models that improves significantly on its predecessor, DeepSeek-VL. The series performs strongly across a range of tasks, including visual question answering, optical character recognition, document/table/chart understanding, and visual localization. It consists of three variants: DeepSeek-VL2-Tiny, DeepSeek-VL2-Small, and DeepSeek-VL2, with 1.0B, 2.8B, and 4.5B active parameters, respectively. DeepSeek-VL2 achieves competitive or state-of-the-art performance against existing open-source dense and MoE-based models with similar or fewer active parameters.

Target Users

The target audience includes enterprises and research institutions requiring image understanding and visual language processing, such as autonomous vehicle companies, security surveillance firms, and smart assistant developers. These users can leverage DeepSeek-VL2 for in-depth analysis and understanding of image content, enhancing their products' visual recognition and interaction capabilities.

Use Cases

In the retail industry, use DeepSeek-VL2 to analyze surveillance videos and identify customer behavior patterns.

In the educational field, utilize DeepSeek-VL2 to interpret images in textbooks, providing an interactive learning experience.

In medical image analysis, employ DeepSeek-VL2 to identify and classify pathological features in medical images.

Features

Visual Question Answering: Capable of understanding and answering questions related to images.

Optical Character Recognition: Identifies textual information in images.

Document/Table/Chart Understanding: Parses and comprehends content in documents, tables, and charts within images.

Visual Localization: Locates specific objects or elements within images.

Multimodal Understanding: Integrates visual and linguistic information to provide deeper content understanding.

Model Variants: Offers models of various sizes to fit different application scenarios and computational resources.

Commercial Use Support: The DeepSeek-VL2 series is suitable for commercial applications.

How to Use

1. Install necessary dependencies: In a Python environment (version >= 3.8), run `pip install -e .` from the root of the cloned DeepSeek-VL2 repository to install the package and its dependencies.

2. Import essential libraries: Import `torch`, `transformers`, and the DeepSeek-VL2 modules.

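3. Specify model path: Set the model path to `deepseek-ai/deepseek-vl2-tiny` for the Tiny variant described here (the Small variant is published as `deepseek-ai/deepseek-vl2-small`).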

4. Load the model and processor: Use `DeepseekVLV2Processor` and `AutoModelForCausalLM` to load the model from the specified path.

5. Prepare input data: Load and prepare the dialogue content and images for input.

6. Run the model to obtain responses: Use the model's generate method to produce responses based on the input embeddings and attention masks.

7. Decode and output results: Decode the encoded output from the model and print the results.
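The steps above can be sketched roughly as follows. This is a minimal sketch, not the project's official example: the calls are modeled on the quick-start pattern in the DeepSeek-VL2 repository and may differ between versions. The helper names `build_conversation` and `answer_question` are hypothetical, and the Tiny repository id used for `MODEL_PATH` is an assumption that can be swapped for another variant. The model-loading part assumes a CUDA GPU and an installed `deepseek_vl2` package.

```python
from typing import Dict, List

# Assumed Hugging Face repo id for the Tiny variant; swap for another variant if needed.
MODEL_PATH = "deepseek-ai/deepseek-vl2-tiny"


def build_conversation(question: str, image_paths: List[str]) -> List[Dict]:
    """Step 5 (hypothetical helper): one user turn with an <image>
    placeholder per image, followed by an empty assistant turn."""
    placeholders = "\n".join("<image>" for _ in image_paths)
    content = f"{placeholders}\n{question}" if image_paths else question
    return [
        {"role": "<|User|>", "content": content, "images": list(image_paths)},
        {"role": "<|Assistant|>", "content": ""},
    ]


def answer_question(question: str, image_paths: List[str], max_new_tokens: int = 512) -> str:
    """Steps 2-7 (hypothetical helper). Requires a CUDA GPU and deepseek_vl2."""
    # Step 2: heavy imports live here so build_conversation works without them.
    import torch
    from transformers import AutoModelForCausalLM
    from deepseek_vl2.models import DeepseekVLV2Processor
    from deepseek_vl2.utils.io import load_pil_images

    # Steps 3-4: load the processor, tokenizer, and model from the model path.
    processor = DeepseekVLV2Processor.from_pretrained(MODEL_PATH)
    tokenizer = processor.tokenizer
    model = AutoModelForCausalLM.from_pretrained(MODEL_PATH, trust_remote_code=True)
    model = model.to(torch.bfloat16).cuda().eval()

    # Step 5: build the dialogue, load the images, and batch the inputs.
    conversation = build_conversation(question, image_paths)
    pil_images = load_pil_images(conversation)
    inputs = processor(
        conversations=conversation,
        images=pil_images,
        force_batchify=True,
        system_prompt="",
    ).to(model.device)

    # Step 6: encode the images into embeddings, then generate greedily.
    inputs_embeds = model.prepare_inputs_embeds(**inputs)
    outputs = model.language.generate(
        inputs_embeds=inputs_embeds,
        attention_mask=inputs.attention_mask,
        pad_token_id=tokenizer.eos_token_id,
        bos_token_id=tokenizer.bos_token_id,
        eos_token_id=tokenizer.eos_token_id,
        max_new_tokens=max_new_tokens,
        do_sample=False,
        use_cache=True,
    )

    # Step 7: decode the generated token ids back into text.
    return tokenizer.decode(outputs[0].cpu().tolist(), skip_special_tokens=True)
```

With the package installed and a GPU available, a call such as `print(answer_question("Describe this image.", ["./example.jpg"]))` would run the full pipeline; the image path here is a placeholder. Keeping the conversation builder separate from model loading makes the input format easy to inspect without downloading any weights.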
