Deepseek V2.5 1210

Overview :

DeepSeek-V2.5-1210 is an upgraded version of DeepSeek-V2.5, improving multiple capabilities including mathematics, coding, and writing inference. The model's performance in the MATH-500 benchmark has increased from 74.8% to 82.8%, while its accuracy in the LiveCodebench (08.01 - 12.01) benchmark has risen from 29.2% to 34.38%. Additionally, the new version enhances user experience in file uploads and web summarization features. The DeepSeek-V2 series (including basics and chat capabilities) supports commercial use.

Target Users :

Target audience includes developers, data scientists, and professionals who require complex programming and mathematical calculations. DeepSeek-V2.5-1210 is particularly suited for professionals who need to handle large amounts of data and complex algorithms due to its high performance in programming and mathematical problem-solving.

Use Cases

Generate C++ quicksort code using DeepSeek-V2.5-1210.

Utilize the model for solving and verifying mathematical problems.

Summarize web content through the model to extract key information.

Features

Performance Enhancement: Significant improvements in mathematics, coding, and writing inference.

User Experience Optimization: Enhanced user interaction for file uploads and web summarization features.

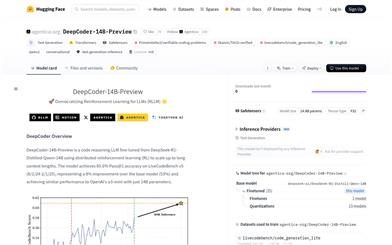

Model Inference: Supports model inference using Hugging Face's Transformers.

vLLM Support: vLLM is recommended for model inference, specific Pull Requests need to be merged.

Function Calling: The model can call external tools to enhance its capabilities.

JSON Output Mode: Ensures the model produces valid JSON objects.

FIM Completion: Provides prefixes and optional suffixes, the model will complete the intermediate content.

How to Use

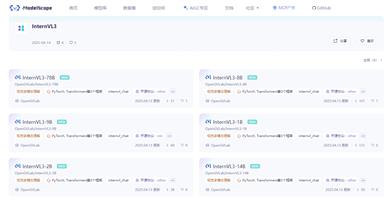

1. Visit the Hugging Face website and search for the DeepSeek-V2.5-1210 model.

2. Select the appropriate inference method based on your needs: use Hugging Face's Transformers or vLLM.

3. If using vLLM, merge the provided Pull Request into the vLLM codebase beforehand.

4. Prepare your input data, which can include programming problems, mathematical queries, or any content for inference.

5. Construct your input according to the model's API documentation and invoke the model for inference.

6. Retrieve the model output and perform any necessary post-processing, such as parsing JSON outputs or continuing with additional FIM tasks.

7. Conduct further analysis or applications based on the output results.

Featured AI Tools

Gemini

Gemini is the latest generation of AI system developed by Google DeepMind. It excels in multimodal reasoning, enabling seamless interaction between text, images, videos, audio, and code. Gemini surpasses previous models in language understanding, reasoning, mathematics, programming, and other fields, becoming one of the most powerful AI systems to date. It comes in three different scales to meet various needs from edge computing to cloud computing. Gemini can be widely applied in creative design, writing assistance, question answering, code generation, and more.

AI Model

11.4M

Chinese Picks

Liblibai

LiblibAI is a leading Chinese AI creative platform offering powerful AI creative tools to help creators bring their imagination to life. The platform provides a vast library of free AI creative models, allowing users to search and utilize these models for image, text, and audio creations. Users can also train their own AI models on the platform. Focused on the diverse needs of creators, LiblibAI is committed to creating inclusive conditions and serving the creative industry, ensuring that everyone can enjoy the joy of creation.

AI Model

6.9M